Compare commits

76 Commits

v2026.01.1...v2026.01.2

| Author | SHA1 | Date | |
|---|---|---|---|
| | 219ba83df3 | | |
| | e412aeb93d | | |
| | 38102ca0c4 | | |
| | 6ab69fba1c | | |
| | e0c0f69dc8 | | |
| | 7921b14dae | | |
| | 30cde9e871 | | |
| | ac50cd249a | | |
| | 927db6dbaa | | |
| | 376c398ac7 | | |
| | a167a3cf83 | | |
| | c51e7dfdf7 | | |
| | 1d4d13b34b | | |
| | 18e8775f38 | | |
| | 813b019653 | | |
| | b0b1542939 | | |
| | 15f19d8b8d | | |
| | 82253b114c | | |
| | e0bfbf6dd4 | | |
| | 4689e80e7a | | |
| | 556e6c1c67 | | |
| | 3ab84a526d | | |
| | bdce96f912 | | |
| | 4811b99a4b | | |
| | fb2a64c07a | | |
| | e023e4f2e2 | | |
| | 0b16b1e0f4 | | |
| | 59073ad7ac | | |
| | 8248644c45 | | |
| | f38e6394c9 | | |
| | 0aaa529c6b | | |
| | b81a6562a1 | | |
| | c5b10db23a | | |
| | d16e444643 | | |
| | 8202468099 | | |
| | 766e8bd20f | | |
| | 1214ab5a8c | | |
| | ebddbb25f8 | | |
| | 59545e1110 | | |
| | 500e090b11 | | |
| | a75ee555fa | | |
| | 6a8c2164cd | | |
| | 7f7efa325a | | |
| | 9ba6cb08fc | | |
| | 1872271a2d | | |
| | 813b50864a | | |
| | b18cefe320 | | |
| | a54c359fcf | | |
| | 8d83221a4a | | |
| | 1879000720 | | |
| | ba92649a98 | | |
| | d2276dcaae | | |
| | 25c9d20f3d | | |
| | 0d853577df | | |
| | f91f3d8692 | | |
| | 0f7cad8dfa | | |
| | db1a1e7ef0 | | |
| | e7de80a059 | | |
| | 0d8c4e048e | | |
| | 014a5a9d1f | | |
| | a6dd970859 | | |
| | aac730f5b1 | | |
| | ff95d9328e | | |
| | afe1d8cf52 | | |
| | 67b819f3de | | |
| | 9b6acb6b95 | | |
| | a9a59e1e34 | | |
| | 5b05397356 | | |
| | 7a7dbc0cfa | | |
| | 6ac0ba6efe | | |
| | d3d008efb4 | | |
| | 4f1528128a | | |
| | 93c4326206 | | |
| | 0fca7fe524 | | |
| | afdcab10c6 | | |
| | f8cc5eabe6 | | |

@@ -90,6 +90,9 @@ Reference: `.github/workflows/release.yml`

|

||||

- Action: Automatically updates the plugin code and metadata on OpenWebUI.com using `scripts/publish_plugin.py`.

|

||||

- **Auto-Sync**: If a local plugin has no ID but matches an existing published plugin by **Title**, the script will automatically fetch the ID, update the local file, and proceed with the update.

|

||||

- Requirement: `OPENWEBUI_API_KEY` secret must be set.

|

||||

- **README Link**: When announcing a release, always include the GitHub README URL for the plugin:

|

||||

- Format: `https://github.com/Fu-Jie/awesome-openwebui/blob/main/plugins/{type}/{name}/README.md`

|

||||

- Example: `https://github.com/Fu-Jie/awesome-openwebui/blob/main/plugins/filters/folder-memory/README.md`
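The README link format and the Auto-Sync rule above can be sketched in Python. This is a minimal illustration and not the code in `scripts/publish_plugin.py`: the names `readme_url` and `resolve_plugin_id`, and the shape of the `published` list (dicts with `id` and `title` keys), are assumptions made for the example.

```python
from typing import Optional

README_BASE = "https://github.com/Fu-Jie/awesome-openwebui/blob/main/plugins"


def readme_url(plugin_type: str, name: str) -> str:
    """Build the README link from the documented {type}/{name} format."""
    return f"{README_BASE}/{plugin_type}/{name}/README.md"


def resolve_plugin_id(local: dict, published: list[dict]) -> Optional[str]:
    """Return the local ID, or fall back to an exact title match.

    The dict layout is hypothetical; the real script may differ.
    """
    if local.get("id"):
        return local["id"]
    for post in published:
        if post.get("title") == local.get("title"):
            return post.get("id")
    return None
```

Calling `readme_url("filters", "folder-memory")` reproduces the example link given above.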

|

||||

|

||||

### Pull Request Check

|

||||

- Workflow: `.github/workflows/plugin-version-check.yml`

|

||||

|

||||

116

.github/copilot-instructions.md

vendored


@@ -62,18 +62,41 @@ plugins/

|

||||

│ ├── ACTION_PLUGIN_TEMPLATE_CN.py # Chinese template

|

||||

│ └── README.md

|

||||

├── filters/ # Filter 插件 (输入处理)

|

||||

│ ├── my_filter/

|

||||

│ │ ├── my_filter.py

|

||||

│ │ ├── 我的过滤器.py

|

||||

│ │ ├── README.md

|

||||

│ │ └── README_CN.md

|

||||

│ └── README.md

|

||||

│ └── ...

|

||||

├── pipes/ # Pipe 插件 (输出处理)

|

||||

│ └── ...

|

||||

└── pipelines/ # Pipeline 插件

|

||||

├── pipelines/ # Pipeline 插件

|

||||

└── ...

|

||||

├── debug/ # 调试与开发工具 (Debug & Development Tools)

|

||||

│ ├── my_debug_tool/

|

||||

│ │ ├── debug_script.py

|

||||

│ │ └── notes.md

|

||||

│ └── ...

|

||||

```

|

||||

|

||||

#### 调试目录规范 (Debug Directory Standards)

|

||||

|

||||

The `plugins/debug/` directory holds debugging scripts, temporary verification code, and development notes.

|

||||

|

||||

**目录结构 (Directory Structure)**:

|

||||

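Group debug tools into subdirectories by the plugin or feature module they belong to, rather than scattering files in the root directory.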

|

||||

|

||||

```

|

||||

plugins/debug/

|

||||

├── my_plugin_name/ # 特定插件的调试文件 (Debug files for specific plugin)

|

||||

│ ├── debug_script.py

|

||||

│ └── guides/

|

||||

├── common_tools/ # 通用调试工具 (General debug tools)

|

||||

│ └── ...

|

||||

└── ...

|

||||

```

|

||||

|

||||

**规范说明 (Guidelines)**:

|

||||

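- **README not required**: Subprojects in this directory do not need a `README.md`.
- **Exempt from publishing**: Nothing in this directory is **ever** processed by the publish script.
- **Flexible content**: May contain Python scripts, Markdown documents, JSON data, and so on.
- **Keep files sorted**: Debug artifacts (such as `test_*.py` or `inspect_*.py`) must not sit directly in the project root; move them into the matching subfolder of this directory.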
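A check for the last rule could look like the sketch below. It only assumes the two filename patterns named in the guideline; the function name `stray_debug_files` is hypothetical and no such helper is part of this diff.

```python
from pathlib import Path

# Filename patterns taken from the guideline above.
DEBUG_PATTERNS = ("test_*.py", "inspect_*.py")


def stray_debug_files(repo_root: Path) -> list[Path]:
    """List debug artifacts that sit directly in the project root."""
    found = []
    for pattern in DEBUG_PATTERNS:
        # A pattern without "**" only matches entries at the top level.
        found.extend(p for p in repo_root.glob(pattern) if p.is_file())
    return sorted(found)
```

Anything the function returns would need to move under `plugins/debug/<subfolder>/`.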

|

||||

|

||||

### 3. 文档字符串规范 (Docstring Standard)

|

||||

|

||||

Every plugin file must begin with a standardized docstring:

|

||||

@@ -100,13 +123,14 @@ description: 插件功能的简短描述。Brief description of plugin functiona

|

||||

| `author_url` | 作者主页链接 | `https://github.com/Fu-Jie/awesome-openwebui` |

|

||||

| `funding_url` | 赞助/项目链接 | `https://github.com/open-webui` |

|

||||

| `version` | 语义化版本号 | `0.1.0`, `1.2.3` |

|

||||

| `icon_url` | 图标 (Base64 编码的 SVG) | 见下方图标规范 |

|

||||

| `icon_url` | 图标 (Base64 编码的 SVG) | 仅 Action 插件**必须**提供。其他类型可选。 |

|

||||

| `requirements` | 额外依赖 (仅 OpenWebUI 环境未安装的) | `python-docx==1.1.2` |

|

||||

| `description` | 功能描述 | `将对话导出为 Word 文档` |

|

||||

|

||||

#### 图标规范 (Icon Guidelines)

|

||||

|

||||

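- Icon source: pick an icon that fits the plugin's function from [Lucide Icons](https://lucide.dev/icons/)
- Scope: **required** for Action plugins, optional for other plugins
- Format: Base64-encoded SVG
- How to get it: download the SVG from Lucide, then Base64-encode it
- Example format: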
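Separately from the file's own example format, which this hunk does not show, the encoding step can be sketched as follows. The `data:image/svg+xml;base64,` prefix and the function name `svg_to_icon_url` are assumptions for illustration; check them against the example in the full file.

```python
import base64


def svg_to_icon_url(svg_text: str) -> str:
    """Encode SVG markup as a Base64 data URI (assumed `icon_url` shape)."""
    encoded = base64.b64encode(svg_text.encode("utf-8")).decode("ascii")
    return f"data:image/svg+xml;base64,{encoded}"
```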

|

||||

@@ -408,6 +432,51 @@ async def long_running_task_with_notification(self, event_emitter, ...):

|

||||

return task_future.result()

|

||||

```

|

||||

|

||||

### 7. 前端数据获取与交互规范 (Frontend Data Access & Interaction)

|

||||

|

||||

#### 获取前端信息 (Retrieving Frontend Info)

|

||||

|

||||

When you need context from the user's browser (such as language, time zone, or LocalStorage), you **must** use the `execute` type of `__event_call__` rather than file uploads or complex API requests.

|

||||

|

||||

```python
async def _get_frontend_value(self, js_code: str) -> str:
    """Helper to execute JS and get return value."""
    try:
        response = await __event_call__(
            {
                "type": "execute",
                "data": {
                    "code": js_code,
                },
            }
        )
        return str(response)
    except Exception as e:
        logger.error(f"Failed to execute JS: {e}")
        return ""

# 示例:获取界面语言 (Get UI Language)
async def get_user_language(self):
    js_code = """
        return (
            localStorage.getItem('locale') ||
            localStorage.getItem('language') ||
            navigator.language ||
            'en-US'
        );
    """
    return await self._get_frontend_value(js_code)
```

|

||||

|

||||

#### 适用场景与引导 (Usage Guidelines)

|

||||

|

||||

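- **Language adaptation**: Detect the UI language (`ru-RU`, `zh-CN`) at runtime and switch the output language automatically.
- **Time zone handling**: Read `Intl.DateTimeFormat().resolvedOptions().timeZone` to handle times.
- **Client-side storage**: Read user preferences from `localStorage`.
- **Hardware capabilities**: Access `navigator.clipboard` or `navigator.geolocation` (requires permission).

**Note**: Even when a plugin has `Valves` settings, try automatic detection first to improve the user experience.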
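One way to act on a detected locale is sketched below: the value returned by a helper such as `get_user_language` is matched against the languages a plugin supports, and a Valve-style default is used only as the fallback, in line with the note above. The `SUPPORTED` tuple and the name `pick_output_language` are hypothetical.

```python
SUPPORTED = ("en-US", "zh-CN", "ru-RU")  # hypothetical set of output languages


def pick_output_language(detected: str, valve_default: str = "en-US") -> str:
    """Map a browser locale string to a supported language, else fall back."""
    tag = (detected or "").strip().replace("_", "-")
    if not tag:
        return valve_default
    # Exact match first, ignoring case ("zh-cn" -> "zh-CN").
    for lang in SUPPORTED:
        if lang.lower() == tag.lower():
            return lang
    # Then match on the primary subtag only ("ru" -> "ru-RU").
    primary = tag.split("-")[0].lower()
    for lang in SUPPORTED:
        if lang.split("-")[0].lower() == primary:
            return lang
    return valve_default
```

An empty string, which `_get_frontend_value` returns on failure, falls through to the default.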

|

||||

|

||||

---

|

||||

|

||||

## ⚡ Action 插件规范 (Action Plugin Standards)

|

||||

@@ -788,6 +857,19 @@ Filter 实例是**单例 (Singleton)**。

|

||||

|

||||

---

|

||||

|

||||

## 🧪 测试规范 (Testing Standards)

|

||||

|

||||

### 1. Copilot SDK 测试模型 (Copilot SDK Test Models)

|

||||

|

||||

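When writing Copilot SDK test scripts (such as `test_injection.py` or `test_capabilities.py`), you **must** prefer one of the following free or low-cost models. Using expensive models for routine testing is strictly forbidden unless the user explicitly asks for it:

- `gpt-5-mini` (Preferred)
- `gpt-4.1`

This rule applies to all automated test scripts and ad hoc verification scripts.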
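A test script could enforce this rule with a small guard like the sketch below. Only the two model names come from the rule; the function name `choose_test_model` and the `user_approved` flag are assumptions for illustration.

```python
from typing import Optional

# Allowed models from the rule above; the first entry is the preferred one.
ALLOWED_TEST_MODELS = ("gpt-5-mini", "gpt-4.1")


def choose_test_model(requested: Optional[str] = None, user_approved: bool = False) -> str:
    """Pick a model for a test run, refusing costly models by default."""
    if requested is None:
        return ALLOWED_TEST_MODELS[0]
    if requested in ALLOWED_TEST_MODELS or user_approved:
        return requested
    raise ValueError(
        f"{requested!r} is not an approved test model; use one of {ALLOWED_TEST_MODELS}"
    )
```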

|

||||

|

||||

---

|

||||

|

||||

## 🔄 工作流与流程 (Workflow & Process)

|

||||

|

||||

### 1. ✅ 开发检查清单 (Development Checklist)

|

||||

@@ -822,11 +904,27 @@ Filter 实例是**单例 (Singleton)**。

|

||||

|

||||

#### Commit Message Standard

Use the Conventional Commits format (`feat`, `fix`, `docs`, etc.).
The commit title and body **must** describe the change clearly so that it is readable and traceable on the Release page.

Requirements:
- The title must state what was done and the scope affected (avoid vague wording).
- The body must list the key changes (1-3 items), each matching an actual change.
- If user-facing or plugin behavior is affected, the body must state the impact and give migration notes.

Recommended format:
- `feat(actions): add export settings panel`
- `fix(filters): handle empty metadata to avoid crash`
- `docs(plugins): update bilingual README structure`

Body example:
- Add valves for export format selection
- Update README/README_CN to include What's New section
- Migration: default TITLE_SOURCE changed to chat_title
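The title and body rules can be checked mechanically. The sketch below is one possible reading and not a tool from this repository: the type list beyond `feat`, `fix`, and `docs`, the mandatory scope, and the name `check_commit` are assumptions (Conventional Commits itself treats the scope as optional).

```python
import re

# type(scope): summary, modeled on the recommended titles above.
TITLE_RE = re.compile(r"^(feat|fix|docs|refactor|test|chore)\(([a-z0-9_-]+)\): (\S.*)$")


def check_commit(message: str) -> list[str]:
    """Return a list of problems; an empty list means the message passes."""
    lines = message.strip().splitlines()
    problems = []
    if not lines or not TITLE_RE.match(lines[0]):
        problems.append("title must look like 'type(scope): summary'")
    # Count "- " bullets in the body as the listed key changes.
    bullets = [ln for ln in lines[1:] if ln.strip().startswith("- ")]
    if not 1 <= len(bullets) <= 3:
        problems.append("body must list 1-3 key changes as '- ' bullets")
    return problems
```

A check like this cannot tell whether the bullets match the actual changes; that part of the rule still needs a human reviewer.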

|

||||

|

||||

### 4. 🤖 Git Operations (Agent Rules)

|

||||

|

||||

- **Allowed**: Push directly to the `main` branch and publish.
- **Allowed**: Create feature branches (`feature/xxx`) and push to them.
- **Forbidden**: Push directly to `main`, or auto-merge into `main`.

|

||||

|

||||

### 5. 🤝 贡献者认可规范 (Contributor Recognition)

|

||||

|

||||

|

||||

1

.github/workflows/publish_plugin.yml

vendored


@@ -6,6 +6,7 @@ on:

|

||||

- main

|

||||

paths:

|

||||

- 'plugins/**/*.py'

|

||||

- '!plugins/debug/**'

|

||||

release:

|

||||

types: [published]

|

||||

workflow_dispatch:

|

||||

|

||||

46

.github/workflows/release.yml

vendored


@@ -246,6 +246,52 @@ jobs:

|

||||

echo "=== Collected Files ==="

|

||||

find release_plugins -name "*.py" -type f | head -20

|

||||

|

||||

      - name: Update plugin icon URLs
        run: |
          echo "Updating icon_url in plugins to use absolute GitHub URLs..."
          # Base URL for raw content using the release tag
          REPO_URL="https://raw.githubusercontent.com/${{ github.repository }}/${{ steps.version.outputs.version }}"

          find release_plugins -name "*.py" | while read -r file; do
            # $file is like release_plugins/plugins/actions/infographic/infographic.py
            # Remove release_plugins/ prefix to get the path in the repo
            src_file="${file#release_plugins/}"
            src_dir=$(dirname "$src_file")
            base_name=$(basename "$src_file" .py)

            # Check if a corresponding png exists in the source repository
            png_file="${src_dir}/${base_name}.png"

            if [ -f "$png_file" ]; then
              echo "Found icon for $src_file: $png_file"
              TARGET_ICON_URL="${REPO_URL}/${png_file}"

              # Use python for safe replacement
              python3 -c "
          import sys
          import re

          file_path = '$file'
          icon_url = '$TARGET_ICON_URL'

          try:
              with open(file_path, 'r', encoding='utf-8') as f:
                  content = f.read()

              # Replace icon_url: ... with new url
              # Matches 'icon_url: ...' and replaces it
              new_content = re.sub(r'^icon_url:.*$', f'icon_url: {icon_url}', content, flags=re.MULTILINE)

              with open(file_path, 'w', encoding='utf-8') as f:
                  f.write(new_content)
              print(f'Successfully updated icon_url in {file_path}')
          except Exception as e:
              print(f'Error updating {file_path}: {e}', file=sys.stderr)
              sys.exit(1)
          "
            fi
          done

      - name: Debug Filenames
        run: |
          python3 -c "import sys; print(f'Filesystem encoding: {sys.getfilesystemencoding()}')"
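The `icon_url` rewrite performed by the "Update plugin icon URLs" step can also be written as a standalone function, which is easier to test than an inline `python3 -c` string. The sketch below is a variant and not the workflow's code: it passes a function as the replacement so that the URL is inserted literally even if it contains backslashes, and the name `set_icon_url` is hypothetical.

```python
import re

# Same pattern as the workflow step: any line that starts with "icon_url:".
ICON_LINE = re.compile(r"^icon_url:.*$", flags=re.MULTILINE)


def set_icon_url(source: str, icon_url: str) -> str:
    """Replace every `icon_url:` line in a plugin file with the new URL."""
    # A callable replacement is not scanned for escapes such as "\1".
    return ICON_LINE.sub(lambda _match: f"icon_url: {icon_url}", source)
```

Files without an `icon_url:` line come back unchanged.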

|

||||

|

||||

29

README.md


@@ -10,28 +10,28 @@ A collection of enhancements, plugins, and prompts for [OpenWebUI](https://githu

|

||||

<!-- STATS_START -->

|

||||

## 📊 Community Stats

|

||||

|

||||

> 🕐 Auto-updated: 2026-01-18 00:08

|

||||

> 🕐 Auto-updated: 2026-01-28 01:12

|

||||

|

||||

| 👤 Author | 👥 Followers | ⭐ Points | 🏆 Contributions |

|

||||

|:---:|:---:|:---:|:---:|

|

||||

| [Fu-Jie](https://openwebui.com/u/Fu-Jie) | **119** | **113** | **25** |

|

||||

| [Fu-Jie](https://openwebui.com/u/Fu-Jie) | **165** | **157** | **34** |

|

||||

|

||||

| 📝 Posts | ⬇️ Downloads | 👁️ Views | 👍 Upvotes | 💾 Saves |

|

||||

|:---:|:---:|:---:|:---:|:---:|

|

||||

| **16** | **1659** | **19990** | **99** | **126** |

|

||||

| **19** | **2501** | **29277** | **141** | **197** |

|

||||

|

||||

### 🔥 Top 6 Popular Plugins

|

||||

|

||||

> 🕐 Auto-updated: 2026-01-18 00:08

|

||||

> 🕐 Auto-updated: 2026-01-28 01:12

|

||||

|

||||

| Rank | Plugin | Version | Downloads | Views | Updated |

|

||||

|:---:|------|:---:|:---:|:---:|:---:|

|

||||

| 🥇 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | 0.9.1 | 507 | 4641 | 2026-01-17 |

|

||||

| 🥈 | [📊 Smart Infographic (AntV)](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | 1.4.9 | 228 | 2285 | 2026-01-17 |

|

||||

| 🥉 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | 0.3.7 | 202 | 751 | 2026-01-07 |

|

||||

| 4️⃣ | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | 1.1.3 | 174 | 1906 | 2026-01-17 |

|

||||

| 5️⃣ | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | 0.4.3 | 134 | 1255 | 2026-01-17 |

|

||||

| 6️⃣ | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | 0.2.4 | 132 | 2269 | 2026-01-17 |

|

||||

| 🥇 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | 0.9.1 | 652 | 5828 | 2026-01-17 |

|

||||

| 🥈 | [Smart Infographic](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | 1.4.9 | 434 | 3928 | 2026-01-25 |

|

||||

| 🥉 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | 0.3.7 | 263 | 1082 | 2026-01-07 |

|

||||

| 4️⃣ | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | 0.4.3 | 238 | 1916 | 2026-01-17 |

|

||||

| 5️⃣ | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | 1.2.2 | 236 | 2557 | 2026-01-21 |

|

||||

| 6️⃣ | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | 0.2.4 | 176 | 2769 | 2026-01-17 |

|

||||

|

||||

*See full stats in [Community Stats Report](./docs/community-stats.md)*

|

||||

<!-- STATS_END -->

|

||||

@@ -43,6 +43,7 @@ A collection of enhancements, plugins, and prompts for [OpenWebUI](https://githu

|

||||

Located in the `plugins/` directory, containing Python-based enhancements:

|

||||

|

||||

#### Actions

|

||||

|

||||

- **Smart Mind Map** (`smart-mind-map`): Generates interactive mind maps from text.

|

||||

- **Smart Infographic** (`infographic`): Transforms text into professional infographics using AntV.

|

||||

- **Flash Card** (`flash-card`): Quickly generates beautiful flashcards for learning.

|

||||

@@ -51,11 +52,18 @@ Located in the `plugins/` directory, containing Python-based enhancements:

|

||||

- **Export to Word** (`export_to_docx`): Exports chat history to Word documents.

|

||||

|

||||

#### Filters

|

||||

|

||||

- **Async Context Compression** (`async-context-compression`): Optimizes token usage via context compression.

|

||||

- **Context Enhancement** (`context_enhancement_filter`): Enhances chat context.

|

||||

- **Folder Memory** (`folder-memory`): Automatically extracts project rules from conversations and injects them into the folder's system prompt.

|

||||

- **Markdown Normalizer** (`markdown_normalizer`): Fixes common Markdown formatting issues in LLM outputs.

|

||||

|

||||

#### Pipes

|

||||

|

||||

- **GitHub Copilot SDK** (`github-copilot-sdk`): Official GitHub Copilot SDK integration. Supports dynamic models, multi-turn conversation, streaming, multimodal input, and infinite sessions.

|

||||

|

||||

#### Pipelines

|

||||

|

||||

- **MoE Prompt Refiner** (`moe_prompt_refiner`): Refines prompts for Mixture of Experts (MoE) summary requests to generate high-quality comprehensive reports.

|

||||

|

||||

### 🎯 Prompts

|

||||

@@ -100,6 +108,7 @@ This project is a collection of resources and does not require a Python environm

|

||||

### Contributing

|

||||

|

||||

If you have great prompts or plugins to share:

|

||||

|

||||

1. Fork this repository.

|

||||

2. Add your files to the appropriate `prompts/` or `plugins/` directory.

|

||||

3. Submit a Pull Request.

|

||||

|

||||

27

README_CN.md


@@ -7,28 +7,28 @@ OpenWebUI 增强功能集合。包含个人开发与收集的插件、提示词

|

||||

<!-- STATS_START -->

|

||||

## 📊 社区统计

|

||||

|

||||

> 🕐 自动更新于 2026-01-18 00:08

|

||||

> 🕐 自动更新于 2026-01-28 01:12

|

||||

|

||||

| 👤 作者 | 👥 粉丝 | ⭐ 积分 | 🏆 贡献 |

|

||||

|:---:|:---:|:---:|:---:|

|

||||

| [Fu-Jie](https://openwebui.com/u/Fu-Jie) | **119** | **113** | **25** |

|

||||

| [Fu-Jie](https://openwebui.com/u/Fu-Jie) | **165** | **157** | **34** |

|

||||

|

||||

| 📝 发布 | ⬇️ 下载 | 👁️ 浏览 | 👍 点赞 | 💾 收藏 |

|

||||

|:---:|:---:|:---:|:---:|:---:|

|

||||

| **16** | **1659** | **19990** | **99** | **126** |

|

||||

| **19** | **2501** | **29277** | **141** | **197** |

|

||||

|

||||

### 🔥 热门插件 Top 6

|

||||

|

||||

> 🕐 自动更新于 2026-01-18 00:08

|

||||

> 🕐 自动更新于 2026-01-28 01:12

|

||||

|

||||

| 排名 | 插件 | 版本 | 下载 | 浏览 | 更新日期 |

|

||||

|:---:|------|:---:|:---:|:---:|:---:|

|

||||

| 🥇 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | 0.9.1 | 507 | 4641 | 2026-01-17 |

|

||||

| 🥈 | [📊 Smart Infographic (AntV)](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | 1.4.9 | 228 | 2285 | 2026-01-17 |

|

||||

| 🥉 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | 0.3.7 | 202 | 751 | 2026-01-07 |

|

||||

| 4️⃣ | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | 1.1.3 | 174 | 1906 | 2026-01-17 |

|

||||

| 5️⃣ | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | 0.4.3 | 134 | 1255 | 2026-01-17 |

|

||||

| 6️⃣ | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | 0.2.4 | 132 | 2269 | 2026-01-17 |

|

||||

| 🥇 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | 0.9.1 | 652 | 5828 | 2026-01-17 |

|

||||

| 🥈 | [Smart Infographic](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | 1.4.9 | 434 | 3928 | 2026-01-25 |

|

||||

| 🥉 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | 0.3.7 | 263 | 1082 | 2026-01-07 |

|

||||

| 4️⃣ | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | 0.4.3 | 238 | 1916 | 2026-01-17 |

|

||||

| 5️⃣ | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | 1.2.2 | 236 | 2557 | 2026-01-21 |

|

||||

| 6️⃣ | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | 0.2.4 | 176 | 2769 | 2026-01-17 |

|

||||

|

||||

*完整统计请查看 [社区统计报告](./docs/community-stats.zh.md)*

|

||||

<!-- STATS_END -->

|

||||

@@ -40,6 +40,7 @@ OpenWebUI 增强功能集合。包含个人开发与收集的插件、提示词

|

||||

位于 `plugins/` 目录,包含各类 Python 编写的功能增强插件:

|

||||

|

||||

#### Actions (交互增强)

|

||||

|

||||

- **Smart Mind Map** (`smart-mind-map`): 智能分析文本并生成交互式思维导图。

|

||||

- **Smart Infographic** (`infographic`): 基于 AntV 的智能信息图生成工具。

|

||||

- **Flash Card** (`flash-card`): 快速生成精美的学习记忆卡片。

|

||||

@@ -48,17 +49,22 @@ OpenWebUI 增强功能集合。包含个人开发与收集的插件、提示词

|

||||

- **Export to Word** (`export_to_docx`): 将对话内容导出为 Word 文档。

|

||||

|

||||

#### Filters (消息处理)

|

||||

|

||||

- **Async Context Compression** (`async-context-compression`): 异步上下文压缩,优化 Token 使用。

|

||||

- **Context Enhancement** (`context_enhancement_filter`): 上下文增强过滤器。

|

||||

- **Folder Memory** (`folder-memory`): 自动从对话中提取项目规则并注入到文件夹系统提示词中。

|

||||

- **Gemini Manifold Companion** (`gemini_manifold_companion`): Gemini Manifold 配套增强。

|

||||

- **Gemini Multimodal Filter** (`web_gemini_multimodel_filter`): 为任意模型提供多模态能力(PDF、Office、视频等),支持智能路由和字幕精修。

|

||||

- **Markdown Normalizer** (`markdown_normalizer`): 修复 LLM 输出中常见的 Markdown 格式问题。

|

||||

- **Multi-Model Context Merger** (`multi_model_context_merger`): 自动合并并注入多模型回答的上下文。

|

||||

|

||||

#### Pipes (模型管道)

|

||||

|

||||

- **GitHub Copilot SDK** (`github-copilot-sdk`): GitHub Copilot SDK 官方集成。支持动态模型、多轮对话、流式输出、图片输入及无限会话。

|

||||

- **Gemini Manifold** (`gemini_mainfold`): 集成 Gemini 模型的管道。

|

||||

|

||||

#### Pipelines (工作流管道)

|

||||

|

||||

- **MoE Prompt Refiner** (`moe_prompt_refiner`): 优化多模型 (MoE) 汇总请求的提示词,生成高质量的综合报告。

|

||||

|

||||

### 🎯 提示词 (Prompts)

|

||||

@@ -106,6 +112,7 @@ OpenWebUI 增强功能集合。包含个人开发与收集的插件、提示词

|

||||

### 贡献代码

|

||||

|

||||

如果你有优质的提示词或插件想要分享:

|

||||

|

||||

1. Fork 本仓库。

|

||||

2. 将你的文件添加到对应的 `prompts/` 或 `plugins/` 目录。

|

||||

3. 提交 Pull Request。

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

{

|

||||

"schemaVersion": 1,

|

||||

"label": "downloads",

|

||||

"message": "1.7k",

|

||||

"message": "2.5k",

|

||||

"color": "blue",

|

||||

"namedLogo": "openwebui"

|

||||

}
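The badge message appears to be an abbreviated form of the `total_downloads` value in the stats file changed in this same diff (1659 before, 2501 after). The script that generates the badge is not part of this diff, so the sketch below only shows one formatting rule that is consistent with both values; the name `badge_message` is hypothetical.

```python
def badge_message(count: int) -> str:
    """Abbreviate a count for a badge, using one decimal place above 999."""
    if count < 1000:
        return str(count)
    return f"{count / 1000:.1f}k"
```

With this rule, 1659 becomes `1.7k` and 2501 becomes `2.5k`, while smaller values such as the follower count stay as plain integers.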

|

||||

@@ -1,6 +1,6 @@

|

||||

{

|

||||

"schemaVersion": 1,

|

||||

"label": "followers",

|

||||

"message": "119",

|

||||

"message": "165",

|

||||

"color": "blue"

|

||||

}

|

||||

@@ -1,6 +1,6 @@

|

||||

{

|

||||

"schemaVersion": 1,

|

||||

"label": "plugins",

|

||||

"message": "16",

|

||||

"message": "19",

|

||||

"color": "green"

|

||||

}

|

||||

@@ -1,6 +1,6 @@

|

||||

{

|

||||

"schemaVersion": 1,

|

||||

"label": "points",

|

||||

"message": "113",

|

||||

"message": "157",

|

||||

"color": "orange"

|

||||

}

|

||||

@@ -1,6 +1,6 @@

|

||||

{

|

||||

"schemaVersion": 1,

|

||||

"label": "upvotes",

|

||||

"message": "99",

|

||||

"message": "141",

|

||||

"color": "brightgreen"

|

||||

}

|

||||

@@ -1,14 +1,16 @@

|

||||

{

|

||||

"total_posts": 16,

|

||||

"total_downloads": 1659,

|

||||

"total_views": 19990,

|

||||

"total_upvotes": 99,

|

||||

"total_posts": 19,

|

||||

"total_downloads": 2501,

|

||||

"total_views": 29277,

|

||||

"total_upvotes": 141,

|

||||

"total_downvotes": 2,

|

||||

"total_saves": 126,

|

||||

"total_comments": 23,

|

||||

"total_saves": 197,

|

||||

"total_comments": 38,

|

||||

"by_type": {

|

||||

"pipe": 1,

|

||||

"action": 14,

|

||||

"unknown": 2

|

||||

"unknown": 3,

|

||||

"filter": 1

|

||||

},

|

||||

"posts": [

|

||||

{

|

||||

@@ -18,29 +20,29 @@

|

||||

"version": "0.9.1",

|

||||

"author": "Fu-Jie",

|

||||

"description": "Intelligently analyzes text content and generates interactive mind maps to help users structure and visualize knowledge.",

|

||||

"downloads": 507,

|

||||

"views": 4641,

|

||||

"upvotes": 14,

|

||||

"saves": 28,

|

||||

"downloads": 652,

|

||||

"views": 5828,

|

||||

"upvotes": 17,

|

||||

"saves": 38,

|

||||

"comments": 11,

|

||||

"created_at": "2025-12-30",

|

||||

"updated_at": "2026-01-17",

|

||||

"url": "https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a"

|

||||

},

|

||||

{

|

||||

"title": "📊 Smart Infographic (AntV)",

|

||||

"title": "Smart Infographic",

|

||||

"slug": "smart_infographic_ad6f0c7f",

|

||||

"type": "action",

|

||||

"version": "1.4.9",

|

||||

"author": "Fu-Jie",

|

||||

"description": "AI-powered infographic generator based on AntV Infographic. Supports professional templates, auto-icon matching, and SVG/PNG downloads.",

|

||||

"downloads": 228,

|

||||

"views": 2285,

|

||||

"upvotes": 11,

|

||||

"saves": 16,

|

||||

"comments": 2,

|

||||

"downloads": 434,

|

||||

"views": 3928,

|

||||

"upvotes": 18,

|

||||

"saves": 28,

|

||||

"comments": 10,

|

||||

"created_at": "2025-12-28",

|

||||

"updated_at": "2026-01-17",

|

||||

"updated_at": "2026-01-25",

|

||||

"url": "https://openwebui.com/posts/smart_infographic_ad6f0c7f"

|

||||

},

|

||||

{

|

||||

@@ -50,31 +52,15 @@

|

||||

"version": "0.3.7",

|

||||

"author": "Fu-Jie",

|

||||

"description": "Extracts tables from chat messages and exports them to Excel (.xlsx) files with smart formatting.",

|

||||

"downloads": 202,

|

||||

"views": 751,

|

||||

"upvotes": 3,

|

||||

"saves": 5,

|

||||

"downloads": 263,

|

||||

"views": 1082,

|

||||

"upvotes": 4,

|

||||

"saves": 6,

|

||||

"comments": 0,

|

||||

"created_at": "2025-05-30",

|

||||

"updated_at": "2026-01-07",

|

||||

"url": "https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d"

|

||||

},

|

||||

{

|

||||

"title": "Async Context Compression",

|

||||

"slug": "async_context_compression_b1655bc8",

|

||||

"type": "action",

|

||||

"version": "1.1.3",

|

||||

"author": "Fu-Jie",

|

||||

"description": "Reduces token consumption in long conversations while maintaining coherence through intelligent summarization and message compression.",

|

||||

"downloads": 174,

|

||||

"views": 1906,

|

||||

"upvotes": 7,

|

||||

"saves": 18,

|

||||

"comments": 0,

|

||||

"created_at": "2025-11-08",

|

||||

"updated_at": "2026-01-17",

|

||||

"url": "https://openwebui.com/posts/async_context_compression_b1655bc8"

|

||||

},

|

||||

{

|

||||

"title": "Export to Word (Enhanced)",

|

||||

"slug": "export_to_word_enhanced_formatting_fca6a315",

|

||||

@@ -82,15 +68,31 @@

|

||||

"version": "0.4.3",

|

||||

"author": "Fu-Jie",

|

||||

"description": "Export current conversation from Markdown to Word (.docx) with Mermaid diagrams rendered client-side (Mermaid.js, SVG+PNG), LaTeX math, real hyperlinks, improved tables, syntax highlighting, and blockquote support.",

|

||||

"downloads": 134,

|

||||

"views": 1255,

|

||||

"upvotes": 6,

|

||||

"saves": 15,

|

||||

"downloads": 238,

|

||||

"views": 1916,

|

||||

"upvotes": 8,

|

||||

"saves": 21,

|

||||

"comments": 0,

|

||||

"created_at": "2026-01-03",

|

||||

"updated_at": "2026-01-17",

|

||||

"url": "https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315"

|

||||

},

|

||||

{

|

||||

"title": "Async Context Compression",

|

||||

"slug": "async_context_compression_b1655bc8",

|

||||

"type": "action",

|

||||

"version": "1.2.2",

|

||||

"author": "Fu-Jie",

|

||||

"description": "Reduces token consumption in long conversations while maintaining coherence through intelligent summarization and message compression.",

|

||||

"downloads": 236,

|

||||

"views": 2557,

|

||||

"upvotes": 9,

|

||||

"saves": 27,

|

||||

"comments": 0,

|

||||

"created_at": "2025-11-08",

|

||||

"updated_at": "2026-01-21",

|

||||

"url": "https://openwebui.com/posts/async_context_compression_b1655bc8"

|

||||

},

|

||||

{

|

||||

"title": "Flash Card",

|

||||

"slug": "flash_card_65a2ea8f",

|

||||

@@ -98,10 +100,10 @@

|

||||

"version": "0.2.4",

|

||||

"author": "Fu-Jie",

|

||||

"description": "Quickly generates beautiful flashcards from text, extracting key points and categories.",

|

||||

"downloads": 132,

|

||||

"views": 2269,

|

||||

"upvotes": 8,

|

||||

"saves": 10,

|

||||

"downloads": 176,

|

||||

"views": 2769,

|

||||

"upvotes": 11,

|

||||

"saves": 13,

|

||||

"comments": 2,

|

||||

"created_at": "2025-12-30",

|

||||

"updated_at": "2026-01-17",

|

||||

@@ -111,34 +113,18 @@

|

||||

"title": "Markdown Normalizer",

|

||||

"slug": "markdown_normalizer_baaa8732",

|

||||

"type": "action",

|

||||

"version": "1.2.2",

|

||||

"version": "1.2.4",

|

||||

"author": "Fu-Jie",

|

||||

"description": "A content normalizer filter that fixes common Markdown formatting issues in LLM outputs, such as broken code blocks, LaTeX formulas, and list formatting.",

|

||||

"downloads": 63,

|

||||

"views": 1746,

|

||||

"upvotes": 9,

|

||||

"saves": 15,

|

||||

"downloads": 160,

|

||||

"views": 2884,

|

||||

"upvotes": 10,

|

||||

"saves": 22,

|

||||

"comments": 5,

|

||||

"created_at": "2026-01-12",

|

||||

"updated_at": "2026-01-17",

|

||||

"updated_at": "2026-01-19",

|

||||

"url": "https://openwebui.com/posts/markdown_normalizer_baaa8732"

|

||||

},

|

||||

{

|

||||

"title": "导出为 Word (增强版)",

|

||||

"slug": "导出为_word_支持公式流程图表格和代码块_8a6306c0",

|

||||

"type": "action",

|

||||

"version": "0.4.3",

|

||||

"author": "Fu-Jie",

|

||||

"description": "将对话导出为 Word (.docx),支持 Mermaid 图表 (客户端渲染 SVG+PNG)、LaTeX 数学公式、真实超链接、增强表格格式、代码高亮和引用块。",

|

||||

"downloads": 62,

|

||||

"views": 1262,

|

||||

"upvotes": 10,

|

||||

"saves": 3,

|

||||

"comments": 1,

|

||||

"created_at": "2026-01-04",

|

||||

"updated_at": "2026-01-17",

|

||||

"url": "https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0"

|

||||

},

|

||||

{

|

||||

"title": "Deep Dive",

|

||||

"slug": "deep_dive_c0b846e4",

|

||||

@@ -146,15 +132,31 @@

|

||||

"version": "1.0.0",

|

||||

"author": "Fu-Jie",

|

||||

"description": "A comprehensive thinking lens that dives deep into any content - from context to logic, insights, and action paths.",

|

||||

"downloads": 58,

|

||||

"views": 605,

|

||||

"upvotes": 3,

|

||||

"saves": 5,

|

||||

"downloads": 94,

|

||||

"views": 863,

|

||||

"upvotes": 4,

|

||||

"saves": 8,

|

||||

"comments": 0,

|

||||

"created_at": "2026-01-08",

|

||||

"updated_at": "2026-01-08",

|

||||

"url": "https://openwebui.com/posts/deep_dive_c0b846e4"

|

||||

},

|

||||

{

|

||||

"title": "导出为 Word (增强版)",

|

||||

"slug": "导出为_word_支持公式流程图表格和代码块_8a6306c0",

|

||||

"type": "action",

|

||||

"version": "0.4.3",

|

||||

"author": "Fu-Jie",

|

||||

"description": "将对话导出为 Word (.docx),支持 Mermaid 图表 (客户端渲染 SVG+PNG)、LaTeX 数学公式、真实超链接、增强表格格式、代码高亮和引用块。",

|

||||

"downloads": 89,

|

||||

"views": 1689,

|

||||

"upvotes": 11,

|

||||

"saves": 4,

|

||||

"comments": 4,

|

||||

"created_at": "2026-01-04",

|

||||

"updated_at": "2026-01-17",

|

||||

"url": "https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0"

|

||||

},

|

||||

{

|

||||

"title": "📊 智能信息图 (AntV Infographic)",

|

||||

"slug": "智能信息图_e04a48ff",

|

||||

@@ -162,15 +164,31 @@

|

||||

"version": "1.4.9",

|

||||

"author": "Fu-Jie",

|

||||

"description": "基于 AntV Infographic 的智能信息图生成插件。支持多种专业模板,自动图标匹配,并提供 SVG/PNG 下载功能。",

|

||||

"downloads": 41,

|

||||

"views": 654,

|

||||

"upvotes": 5,

|

||||

"downloads": 47,

|

||||

"views": 799,

|

||||

"upvotes": 6,

|

||||

"saves": 0,

|

||||

"comments": 0,

|

||||

"created_at": "2025-12-28",

|

||||

"updated_at": "2026-01-17",

|

||||

"url": "https://openwebui.com/posts/智能信息图_e04a48ff"

|

||||

},

|

||||

{

|

||||

"title": "📂 Folder Memory – Auto-Evolving Project Context",

|

||||

"slug": "folder_memory_auto_evolving_project_context_4a9875b2",

|

||||

"type": "filter",

|

||||

"version": "0.1.0",

|

||||

"author": "Fu-Jie",

|

||||

"description": "Automatically extracts project rules from conversations and injects them into the folder's system prompt.",

|

||||

"downloads": 28,

|

||||

"views": 810,

|

||||

"upvotes": 4,

|

||||

"saves": 7,

|

||||

"comments": 0,

|

||||

"created_at": "2026-01-20",

|

||||

"updated_at": "2026-01-20",

|

||||

"url": "https://openwebui.com/posts/folder_memory_auto_evolving_project_context_4a9875b2"

|

||||

},

|

||||

{

|

||||

"title": "思维导图",

|

||||

"slug": "智能生成交互式思维导图帮助用户可视化知识_8d4b097b",

|

||||

@@ -178,15 +196,31 @@

|

||||

"version": "0.9.1",

|

||||

"author": "Fu-Jie",

|

||||

"description": "智能分析文本内容,生成交互式思维导图,帮助用户结构化和可视化知识。",

|

||||

"downloads": 22,

|

||||

"views": 392,

|

||||

"upvotes": 2,

|

||||

"downloads": 27,

|

||||

"views": 463,

|

||||

"upvotes": 4,

|

||||

"saves": 1,

|

||||

"comments": 0,

|

||||

"created_at": "2025-12-31",

|

||||

"updated_at": "2026-01-17",

|

||||

"url": "https://openwebui.com/posts/智能生成交互式思维导图帮助用户可视化知识_8d4b097b"

|

||||

},

|

||||

{

|

||||

"title": "异步上下文压缩",

|

||||

"slug": "异步上下文压缩_5c0617cb",

|

||||

"type": "action",

|

||||

"version": "1.2.2",

|

||||

"author": "Fu-Jie",

|

||||

"description": "通过智能摘要和消息压缩,降低长对话的 token 消耗,同时保持对话连贯性。",

|

||||

"downloads": 22,

|

||||

"views": 511,

|

||||

"upvotes": 5,

|

||||

"saves": 2,

|

||||

"comments": 0,

|

||||

"created_at": "2025-11-08",

|

||||

"updated_at": "2026-01-21",

|

||||

"url": "https://openwebui.com/posts/异步上下文压缩_5c0617cb"

|

||||

},

|

||||

{

|

||||

"title": "闪记卡 (Flash Card)",

|

||||

"slug": "闪记卡生成插件_4a31eac3",

|

||||

@@ -194,31 +228,15 @@

|

||||

"version": "0.2.4",

|

||||

"author": "Fu-Jie",

|

||||

"description": "快速将文本提炼为精美的学习记忆卡片,支持核心要点提取与分类。",

|

||||

"downloads": 16,

|

||||

"views": 430,

|

||||

"upvotes": 4,

|

||||

"downloads": 19,

|

||||

"views": 524,

|

||||

"upvotes": 6,

|

||||

"saves": 1,

|

||||

"comments": 0,

|

||||

"created_at": "2025-12-30",

|

||||

"updated_at": "2026-01-17",

|

||||

"url": "https://openwebui.com/posts/闪记卡生成插件_4a31eac3"

|

||||

},

|

||||

{

|

||||

"title": "异步上下文压缩",

|

||||

"slug": "异步上下文压缩_5c0617cb",

|

||||

"type": "action",

|

||||

"version": "1.1.3",

|

||||

"author": "Fu-Jie",

|

||||

"description": "通过智能摘要和消息压缩,降低长对话的 token 消耗,同时保持对话连贯性。",

|

||||

"downloads": 14,

|

||||

"views": 340,

|

||||

"upvotes": 4,

|

||||

"saves": 1,

|

||||

"comments": 0,

|

||||

"created_at": "2025-11-08",

|

||||

"updated_at": "2026-01-17",

|

||||

"url": "https://openwebui.com/posts/异步上下文压缩_5c0617cb"

|

||||

},

|

||||

{

|

||||

"title": "精读",

|

||||

"slug": "精读_99830b0f",

|

||||

@@ -226,15 +244,47 @@

|

||||

"version": "1.0.0",

|

||||

"author": "Fu-Jie",

|

||||

"description": "全方位的思维透镜 —— 从背景全景到逻辑脉络,从深度洞察到行动路径。",

|

||||

"downloads": 6,

|

||||

"views": 254,

|

||||

"upvotes": 2,

|

||||

"downloads": 9,

|

||||

"views": 316,

|

||||

"upvotes": 3,

|

||||

"saves": 1,

|

||||

"comments": 0,

|

||||

"created_at": "2026-01-08",

|

||||

"updated_at": "2026-01-08",

|

||||

"url": "https://openwebui.com/posts/精读_99830b0f"

|

||||

},

|

||||

{

|

||||

"title": "GitHub Copilot Official SDK Pipe",

|

||||

"slug": "github_copilot_official_sdk_pipe_ce96f7b4",

|

||||

"type": "pipe",

|

||||

"version": "0.2.3",

|

||||

"author": "Fu-Jie",

|

||||

"description": "Integrate GitHub Copilot SDK. Supports dynamic models, multi-turn conversation, streaming, multimodal input, infinite sessions, and frontend debug logging.",

|

||||

"downloads": 7,

|

||||

"views": 295,

|

||||

"upvotes": 2,

|

||||

"saves": 2,

|

||||

"comments": 1,

|

||||

"created_at": "2026-01-26",

|

||||

"updated_at": "2026-01-26",

|

||||

"url": "https://openwebui.com/posts/github_copilot_official_sdk_pipe_ce96f7b4"

|

||||

},

|

||||

{

|

||||

"title": "🚀 Open WebUI Prompt Plus: AI-Powered Prompt Manager",

|

||||

"slug": "open_webui_prompt_plus_ai_powered_prompt_manager_s_15fa060e",

|

||||

"type": "unknown",

|

||||

"version": "",

|

||||

"author": "",

|

||||

"description": "",

|

||||

"downloads": 0,

|

||||

"views": 653,

|

||||

"upvotes": 6,

|

||||

"saves": 8,

|

||||

"comments": 3,

|

||||

"created_at": "2026-01-25",

|

||||

"updated_at": "2026-01-25",

|

||||

"url": "https://openwebui.com/posts/open_webui_prompt_plus_ai_powered_prompt_manager_s_15fa060e"

|

||||

},

|

||||

{

|

||||

"title": "Review of Claude Haiku 4.5",

|

||||

"slug": "review_of_claude_haiku_45_41b0db39",

|

||||

@@ -243,8 +293,8 @@

|

||||

"author": "",

|

||||

"description": "",

|

||||

"downloads": 0,

|

||||

"views": 46,

|

||||

"upvotes": 0,

|

||||

"views": 99,

|

||||

"upvotes": 1,

|

||||

"saves": 0,

|

||||

"comments": 0,

|

||||

"created_at": "2026-01-14",

|

||||

@@ -259,9 +309,9 @@

|

||||

"author": "",

|

||||

"description": "",

|

||||

"downloads": 0,

|

||||

"views": 1154,

|

||||

"upvotes": 11,

|

||||

"saves": 7,

|

||||

"views": 1291,

|

||||

"upvotes": 12,

|

||||

"saves": 8,

|

||||

"comments": 2,

|

||||

"created_at": "2026-01-10",

|

||||

"updated_at": "2026-01-10",

|

||||

@@ -273,11 +323,11 @@

|

||||

"name": "Fu-Jie",

|

||||

"profile_url": "https://openwebui.com/u/Fu-Jie",

|

||||

"profile_image": "https://community.s3.openwebui.com/uploads/users/b15d1348-4347-42b4-b815-e053342d6cb0/profile_d9510745-4bd4-4f8f-a997-4a21847d9300.webp",

|

||||

"followers": 119,

|

||||

"following": 2,

|

||||

"total_points": 113,

|

||||

"post_points": 97,

|

||||

"comment_points": 16,

|

||||

"contributions": 25

|

||||

"followers": 165,

|

||||

"following": 3,

|

||||

"total_points": 157,

|

||||

"post_points": 139,

|

||||

"comment_points": 18,

|

||||

"contributions": 34

|

||||

}

|

||||

}

|

||||

@@ -1,40 +1,45 @@

|

||||

# 📊 OpenWebUI Community Stats Report

|

||||

|

||||

> 📅 Updated: 2026-01-18 00:08

|

||||

> 📅 Updated: 2026-01-28 01:12

|

||||

|

||||

## 📈 Overview

|

||||

|

||||

| Metric | Value |

|

||||

|------|------|

|

||||

| 📝 Total Posts | 16 |

|

||||

| ⬇️ Total Downloads | 1659 |

|

||||

| 👁️ Total Views | 19990 |

|

||||

| 👍 Total Upvotes | 99 |

|

||||

| 💾 Total Saves | 126 |

|

||||

| 💬 Total Comments | 23 |

|

||||

| 📝 Total Posts | 19 |

|

||||

| ⬇️ Total Downloads | 2501 |

|

||||

| 👁️ Total Views | 29277 |

|

||||

| 👍 Total Upvotes | 141 |

|

||||

| 💾 Total Saves | 197 |

|

||||

| 💬 Total Comments | 38 |

|

||||

|

||||

## 📂 By Type

|

||||

|

||||

- **pipe**: 1

|

||||

- **action**: 14

|

||||

- **unknown**: 2

|

||||

- **unknown**: 3

|

||||

- **filter**: 1

|

||||

|

||||

## 📋 Posts List

|

||||

|

||||

| Rank | Title | Type | Version | Downloads | Views | Upvotes | Saves | Updated |

|

||||

|:---:|------|:---:|:---:|:---:|:---:|:---:|:---:|:---:|

|

||||

| 1 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | action | 0.9.1 | 507 | 4641 | 14 | 28 | 2026-01-17 |

|

||||

| 2 | [📊 Smart Infographic (AntV)](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | action | 1.4.9 | 228 | 2285 | 11 | 16 | 2026-01-17 |

|

||||

| 3 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | action | 0.3.7 | 202 | 751 | 3 | 5 | 2026-01-07 |

|

||||

| 4 | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | action | 1.1.3 | 174 | 1906 | 7 | 18 | 2026-01-17 |

|

||||

| 5 | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | action | 0.4.3 | 134 | 1255 | 6 | 15 | 2026-01-17 |

|

||||

| 6 | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | action | 0.2.4 | 132 | 2269 | 8 | 10 | 2026-01-17 |

|

||||

| 7 | [Markdown Normalizer](https://openwebui.com/posts/markdown_normalizer_baaa8732) | action | 1.2.2 | 63 | 1746 | 9 | 15 | 2026-01-17 |

|

||||

| 8 | [导出为 Word (增强版)](https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0) | action | 0.4.3 | 62 | 1262 | 10 | 3 | 2026-01-17 |

|

||||

| 9 | [Deep Dive](https://openwebui.com/posts/deep_dive_c0b846e4) | action | 1.0.0 | 58 | 605 | 3 | 5 | 2026-01-08 |

|

||||

| 10 | [📊 智能信息图 (AntV Infographic)](https://openwebui.com/posts/智能信息图_e04a48ff) | action | 1.4.9 | 41 | 654 | 5 | 0 | 2026-01-17 |

|

||||

| 11 | [思维导图](https://openwebui.com/posts/智能生成交互式思维导图帮助用户可视化知识_8d4b097b) | action | 0.9.1 | 22 | 392 | 2 | 1 | 2026-01-17 |

|

||||

| 12 | [闪记卡 (Flash Card)](https://openwebui.com/posts/闪记卡生成插件_4a31eac3) | action | 0.2.4 | 16 | 430 | 4 | 1 | 2026-01-17 |

|

||||

| 13 | [异步上下文压缩](https://openwebui.com/posts/异步上下文压缩_5c0617cb) | action | 1.1.3 | 14 | 340 | 4 | 1 | 2026-01-17 |

|

||||

| 14 | [精读](https://openwebui.com/posts/精读_99830b0f) | action | 1.0.0 | 6 | 254 | 2 | 1 | 2026-01-08 |

|

||||

| 15 | [Review of Claude Haiku 4.5](https://openwebui.com/posts/review_of_claude_haiku_45_41b0db39) | unknown | | 0 | 46 | 0 | 0 | 2026-01-14 |

|

||||

| 16 | [ 🛠️ Debug Open WebUI Plugins in Your Browser](https://openwebui.com/posts/debug_open_webui_plugins_in_your_browser_81bf7960) | unknown | | 0 | 1154 | 11 | 7 | 2026-01-10 |

|

||||

| 1 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | action | 0.9.1 | 652 | 5828 | 17 | 38 | 2026-01-17 |

|

||||

| 2 | [Smart Infographic](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | action | 1.4.9 | 434 | 3928 | 18 | 28 | 2026-01-25 |

|

||||

| 3 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | action | 0.3.7 | 263 | 1082 | 4 | 6 | 2026-01-07 |

|

||||

| 4 | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | action | 0.4.3 | 238 | 1916 | 8 | 21 | 2026-01-17 |

|

||||

| 5 | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | action | 1.2.2 | 236 | 2557 | 9 | 27 | 2026-01-21 |

|

||||

| 6 | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | action | 0.2.4 | 176 | 2769 | 11 | 13 | 2026-01-17 |

|

||||

| 7 | [Markdown Normalizer](https://openwebui.com/posts/markdown_normalizer_baaa8732) | action | 1.2.4 | 160 | 2884 | 10 | 22 | 2026-01-19 |

|

||||

| 8 | [Deep Dive](https://openwebui.com/posts/deep_dive_c0b846e4) | action | 1.0.0 | 94 | 863 | 4 | 8 | 2026-01-08 |

|

||||

| 9 | [导出为 Word (增强版)](https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0) | action | 0.4.3 | 89 | 1689 | 11 | 4 | 2026-01-17 |

|

||||

| 10 | [📊 智能信息图 (AntV Infographic)](https://openwebui.com/posts/智能信息图_e04a48ff) | action | 1.4.9 | 47 | 799 | 6 | 0 | 2026-01-17 |

|

||||

| 11 | [📂 Folder Memory – Auto-Evolving Project Context](https://openwebui.com/posts/folder_memory_auto_evolving_project_context_4a9875b2) | filter | 0.1.0 | 28 | 810 | 4 | 7 | 2026-01-20 |

|

||||

| 12 | [思维导图](https://openwebui.com/posts/智能生成交互式思维导图帮助用户可视化知识_8d4b097b) | action | 0.9.1 | 27 | 463 | 4 | 1 | 2026-01-17 |

|

||||

| 13 | [异步上下文压缩](https://openwebui.com/posts/异步上下文压缩_5c0617cb) | action | 1.2.2 | 22 | 511 | 5 | 2 | 2026-01-21 |

|

||||

| 14 | [闪记卡 (Flash Card)](https://openwebui.com/posts/闪记卡生成插件_4a31eac3) | action | 0.2.4 | 19 | 524 | 6 | 1 | 2026-01-17 |

|

||||

| 15 | [精读](https://openwebui.com/posts/精读_99830b0f) | action | 1.0.0 | 9 | 316 | 3 | 1 | 2026-01-08 |

|

||||

| 16 | [GitHub Copilot Official SDK Pipe](https://openwebui.com/posts/github_copilot_official_sdk_pipe_ce96f7b4) | pipe | 0.2.3 | 7 | 295 | 2 | 2 | 2026-01-26 |

|

||||

| 17 | [🚀 Open WebUI Prompt Plus: AI-Powered Prompt Manager](https://openwebui.com/posts/open_webui_prompt_plus_ai_powered_prompt_manager_s_15fa060e) | unknown | | 0 | 653 | 6 | 8 | 2026-01-25 |

|

||||

| 18 | [Review of Claude Haiku 4.5](https://openwebui.com/posts/review_of_claude_haiku_45_41b0db39) | unknown | | 0 | 99 | 1 | 0 | 2026-01-14 |

|

||||

| 19 | [ 🛠️ Debug Open WebUI Plugins in Your Browser](https://openwebui.com/posts/debug_open_webui_plugins_in_your_browser_81bf7960) | unknown | | 0 | 1291 | 12 | 8 | 2026-01-10 |

|

||||

|

||||

@@ -1,40 +1,45 @@

|

||||

# 📊 OpenWebUI 社区统计报告

|

||||

|

||||

> 📅 更新时间: 2026-01-18 00:08

|

||||

> 📅 更新时间: 2026-01-28 01:12

|

||||

|

||||

## 📈 总览

|

||||

|

||||

| 指标 | 数值 |

|

||||

|------|------|

|

||||

| 📝 发布数量 | 16 |

|

||||

| ⬇️ 总下载量 | 1659 |

|

||||

| 👁️ 总浏览量 | 19990 |

|

||||

| 👍 总点赞数 | 99 |

|

||||

| 💾 总收藏数 | 126 |

|

||||

| 💬 总评论数 | 23 |

|

||||

| 📝 发布数量 | 19 |

|

||||

| ⬇️ 总下载量 | 2501 |

|

||||

| 👁️ 总浏览量 | 29277 |

|

||||

| 👍 总点赞数 | 141 |

|

||||

| 💾 总收藏数 | 197 |

|

||||

| 💬 总评论数 | 38 |

|

||||

|

||||

## 📂 按类型分类

|

||||

|

||||

- **pipe**: 1

|

||||

- **action**: 14

|

||||

- **unknown**: 2

|

||||

- **unknown**: 3

|

||||

- **filter**: 1

|

||||

|

||||

## 📋 发布列表

|

||||

|

||||

| 排名 | 标题 | 类型 | 版本 | 下载 | 浏览 | 点赞 | 收藏 | 更新日期 |

|

||||

|:---:|------|:---:|:---:|:---:|:---:|:---:|:---:|:---:|

|

||||

| 1 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | action | 0.9.1 | 507 | 4641 | 14 | 28 | 2026-01-17 |

|

||||

| 2 | [📊 Smart Infographic (AntV)](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | action | 1.4.9 | 228 | 2285 | 11 | 16 | 2026-01-17 |

|

||||

| 3 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | action | 0.3.7 | 202 | 751 | 3 | 5 | 2026-01-07 |

|

||||

| 4 | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | action | 1.1.3 | 174 | 1906 | 7 | 18 | 2026-01-17 |

|

||||

| 5 | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | action | 0.4.3 | 134 | 1255 | 6 | 15 | 2026-01-17 |

|

||||

| 6 | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | action | 0.2.4 | 132 | 2269 | 8 | 10 | 2026-01-17 |

|

||||

| 7 | [Markdown Normalizer](https://openwebui.com/posts/markdown_normalizer_baaa8732) | action | 1.2.2 | 63 | 1746 | 9 | 15 | 2026-01-17 |

|

||||

| 8 | [导出为 Word (增强版)](https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0) | action | 0.4.3 | 62 | 1262 | 10 | 3 | 2026-01-17 |

|

||||

| 9 | [Deep Dive](https://openwebui.com/posts/deep_dive_c0b846e4) | action | 1.0.0 | 58 | 605 | 3 | 5 | 2026-01-08 |

|

||||

| 10 | [📊 智能信息图 (AntV Infographic)](https://openwebui.com/posts/智能信息图_e04a48ff) | action | 1.4.9 | 41 | 654 | 5 | 0 | 2026-01-17 |

|

||||

| 11 | [思维导图](https://openwebui.com/posts/智能生成交互式思维导图帮助用户可视化知识_8d4b097b) | action | 0.9.1 | 22 | 392 | 2 | 1 | 2026-01-17 |

|

||||

| 12 | [闪记卡 (Flash Card)](https://openwebui.com/posts/闪记卡生成插件_4a31eac3) | action | 0.2.4 | 16 | 430 | 4 | 1 | 2026-01-17 |

|

||||

| 13 | [异步上下文压缩](https://openwebui.com/posts/异步上下文压缩_5c0617cb) | action | 1.1.3 | 14 | 340 | 4 | 1 | 2026-01-17 |

|

||||

| 14 | [精读](https://openwebui.com/posts/精读_99830b0f) | action | 1.0.0 | 6 | 254 | 2 | 1 | 2026-01-08 |

|

||||

| 15 | [Review of Claude Haiku 4.5](https://openwebui.com/posts/review_of_claude_haiku_45_41b0db39) | unknown | | 0 | 46 | 0 | 0 | 2026-01-14 |

|

||||

| 16 | [ 🛠️ Debug Open WebUI Plugins in Your Browser](https://openwebui.com/posts/debug_open_webui_plugins_in_your_browser_81bf7960) | unknown | | 0 | 1154 | 11 | 7 | 2026-01-10 |

|

||||

| 1 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | action | 0.9.1 | 652 | 5828 | 17 | 38 | 2026-01-17 |

|

||||

| 2 | [Smart Infographic](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | action | 1.4.9 | 434 | 3928 | 18 | 28 | 2026-01-25 |

|

||||

| 3 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | action | 0.3.7 | 263 | 1082 | 4 | 6 | 2026-01-07 |

|

||||

| 4 | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | action | 0.4.3 | 238 | 1916 | 8 | 21 | 2026-01-17 |

|

||||

| 5 | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | action | 1.2.2 | 236 | 2557 | 9 | 27 | 2026-01-21 |

|

||||

| 6 | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | action | 0.2.4 | 176 | 2769 | 11 | 13 | 2026-01-17 |

|

||||

| 7 | [Markdown Normalizer](https://openwebui.com/posts/markdown_normalizer_baaa8732) | action | 1.2.4 | 160 | 2884 | 10 | 22 | 2026-01-19 |

|

||||

| 8 | [Deep Dive](https://openwebui.com/posts/deep_dive_c0b846e4) | action | 1.0.0 | 94 | 863 | 4 | 8 | 2026-01-08 |

|

||||

| 9 | [导出为 Word (增强版)](https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0) | action | 0.4.3 | 89 | 1689 | 11 | 4 | 2026-01-17 |

|

||||

| 10 | [📊 智能信息图 (AntV Infographic)](https://openwebui.com/posts/智能信息图_e04a48ff) | action | 1.4.9 | 47 | 799 | 6 | 0 | 2026-01-17 |

|

||||

| 11 | [📂 Folder Memory – Auto-Evolving Project Context](https://openwebui.com/posts/folder_memory_auto_evolving_project_context_4a9875b2) | filter | 0.1.0 | 28 | 810 | 4 | 7 | 2026-01-20 |

|

||||

| 12 | [思维导图](https://openwebui.com/posts/智能生成交互式思维导图帮助用户可视化知识_8d4b097b) | action | 0.9.1 | 27 | 463 | 4 | 1 | 2026-01-17 |

|

||||

| 13 | [异步上下文压缩](https://openwebui.com/posts/异步上下文压缩_5c0617cb) | action | 1.2.2 | 22 | 511 | 5 | 2 | 2026-01-21 |

|

||||

| 14 | [闪记卡 (Flash Card)](https://openwebui.com/posts/闪记卡生成插件_4a31eac3) | action | 0.2.4 | 19 | 524 | 6 | 1 | 2026-01-17 |

|

||||

| 15 | [精读](https://openwebui.com/posts/精读_99830b0f) | action | 1.0.0 | 9 | 316 | 3 | 1 | 2026-01-08 |

|

||||

| 16 | [GitHub Copilot Official SDK Pipe](https://openwebui.com/posts/github_copilot_official_sdk_pipe_ce96f7b4) | pipe | 0.2.3 | 7 | 295 | 2 | 2 | 2026-01-26 |

|

||||

| 17 | [🚀 Open WebUI Prompt Plus: AI-Powered Prompt Manager](https://openwebui.com/posts/open_webui_prompt_plus_ai_powered_prompt_manager_s_15fa060e) | unknown | | 0 | 653 | 6 | 8 | 2026-01-25 |

|

||||

| 18 | [Review of Claude Haiku 4.5](https://openwebui.com/posts/review_of_claude_haiku_45_41b0db39) | unknown | | 0 | 99 | 1 | 0 | 2026-01-14 |

|

||||

| 19 | [ 🛠️ Debug Open WebUI Plugins in Your Browser](https://openwebui.com/posts/debug_open_webui_plugins_in_your_browser_81bf7960) | unknown | | 0 | 1291 | 12 | 8 | 2026-01-10 |

|

||||

|

||||

@@ -349,6 +349,53 @@ await __event_emitter__(

|

||||

)

|

||||

```

|

||||

|

||||

#### Advanced Use Case: Retrieving Frontend Data

One of the most powerful capabilities of the `execute` event type is the ability to fetch data from the browser environment (JavaScript) and return it to your Python backend. This allows plugins to access information like:

- `localStorage` items (user preferences, tokens)
- `navigator` properties (language, geolocation, platform)
- `document` properties (cookies, URL parameters)

**How it works:**
The JavaScript code you provide in the `"code"` field is executed in the browser. If your JS code includes a `return` statement, that value is sent back to Python as the result of `await __event_call__`.

**Example: Getting the User's UI Language**

|

||||

|

||||

```python
try:
    # Execute JS on the frontend to get language settings
    response = await __event_call__(
        {
            "type": "execute",
            "data": {
                # This JS code runs in the browser.
                # The 'return' value is sent back to Python.
                "code": """
                    return (
                        localStorage.getItem('locale') ||
                        localStorage.getItem('language') ||
                        navigator.language ||
                        'en-US'
                    );
                """,
            },
        }
    )

    # 'response' will contain the string returned by JS (e.g., "en-US", "zh-CN")
    # Note: Wrap in try-except to handle potential timeouts or JS errors
    logger.info(f"Frontend Language: {response}")

except Exception as e:
    logger.error(f"Failed to get frontend data: {e}")
```

|

||||

|

||||

**Key capabilities unlocked:**

- **Context Awareness:** Adapt responses based on user time zone or language.
- **Client-Side Storage:** Use `localStorage` to persist simple plugin settings without a database.
- **Hardware Access:** Request geolocation or clipboard access (requires user permission).
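
The same pattern can be applied to other browser values, such as the user's time zone. The following is a minimal sketch, not part of Open WebUI itself: it assumes `event_call` is the `__event_call__` callable passed to the plugin, and the helper name `get_frontend_value`, its `default` parameter, and the `TIMEZONE_JS` snippet are hypothetical names introduced for illustration. The helper returns a fallback when the call fails or the browser returns nothing usable.

```python
import asyncio
from typing import Any, Awaitable, Callable, Optional

EventCall = Callable[[dict], Awaitable[Any]]

# JavaScript executed in the browser; Intl.DateTimeFormat is a standard browser API.
TIMEZONE_JS = "return Intl.DateTimeFormat().resolvedOptions().timeZone;"


async def get_frontend_value(
    event_call: Optional[EventCall], js_code: str, default: str
) -> str:
    """Run js_code in the browser via an `execute` event (hypothetical helper).

    Falls back to `default` when no event_call is available, the call raises,
    or the result is not a non-empty string.
    """
    if event_call is None:
        return default
    try:
        result = await event_call({"type": "execute", "data": {"code": js_code}})
    except Exception:
        return default
    return result if isinstance(result, str) and result else default


if __name__ == "__main__":
    # Stand-in for __event_call__ so the sketch can run outside Open WebUI.
    async def fake_event_call(event: dict) -> str:
        return "Asia/Shanghai"

    print(asyncio.run(get_frontend_value(fake_event_call, TIMEZONE_JS, "UTC")))
```

Inside a plugin, the call would look like `tz = await get_frontend_value(__event_call__, TIMEZONE_JS, "UTC")`. Passing the callable in as an argument keeps the helper testable without a running frontend.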

|

||||

|

||||

---

|

||||

|

||||

## 🏗️ When & Where to Use Events

|

||||

@@ -421,4 +468,4 @@ Refer to this document for common event types and structures, and explore Open W

|

||||

|

||||

---

|

||||

|

||||

**Happy event-driven coding in Open WebUI! 🚀**

|

||||

**Happy event-driven coding in Open WebUI! 🚀**

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

# Async Context Compression

|

||||

|

||||

<span class="category-badge filter">Filter</span>

|

||||

<span class="version-badge">v1.1.3</span>

|

||||

<span class="version-badge">v1.2.2</span>

|

||||

|

||||

Reduces token consumption in long conversations through intelligent summarization while maintaining conversational coherence.

|

||||

|

||||

@@ -34,6 +34,12 @@ This is especially useful for:

|

||||

- :material-check-all: **Open WebUI v0.7.x Compatibility**: Dynamic DB session handling

|

||||

- :material-account-convert: **Improved Compatibility**: Summary role changed to `assistant`

|

||||

- :material-shield-check: **Enhanced Stability**: Resolved race conditions in state management

|

||||

- :material-ruler: **Preflight Context Check**: Validates context fit before sending

|

||||

- :material-format-align-justify: **Structure-Aware Trimming**: Preserves document structure

|

||||

- :material-content-cut: **Native Tool Output Trimming**: Trims verbose tool outputs (Note: Non-native tool outputs are not fully injected into context)

|

||||

- :material-chart-bar: **Detailed Token Logging**: Granular token breakdown

|

||||

- :material-account-search: **Smart Model Matching**: Inherit config from base models

|

||||

- :material-image-off: **Multimodal Support**: Images are preserved but tokens are **NOT** calculated

|

||||

|

||||

---

|

||||

|

||||

@@ -64,10 +70,14 @@ graph TD

|

||||

|

||||

| Option | Type | Default | Description |

|

||||

|--------|------|---------|-------------|

|

||||

| `token_threshold` | integer | `4000` | Trigger compression above this token count |

|

||||

| `preserve_recent` | integer | `5` | Number of recent messages to keep uncompressed |

|

||||

| `summary_model` | string | `"auto"` | Model to use for summarization |

|

||||

| `compression_ratio` | float | `0.3` | Target compression ratio |

|

||||

| `compression_threshold_tokens` | integer | `64000` | Trigger compression above this token count |

|

||||

| `max_context_tokens` | integer | `128000` | Hard limit for context |

|

||||

| `keep_first` | integer | `1` | Always keep the first N messages |

|

||||

| `keep_last` | integer | `6` | Always keep the last N messages |

|

||||

| `summary_model` | string | `None` | Model to use for summarization |

|

||||

| `summary_model_max_context` | integer | `0` | Max context tokens for summary model |

|

||||

| `max_summary_tokens` | integer | `16384` | Maximum tokens for the summary |

|

||||

| `enable_tool_output_trimming` | boolean | `false` | Enable trimming of large tool outputs |
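
To make the interaction between these options concrete, here is a rough sketch of how a threshold check and the `keep_first` / `keep_last` split could work. This is an assumption-laden illustration, not the filter's actual code: the `CompressionConfig` class and the functions `should_compress` and `split_for_summary` are hypothetical, and only the option names and default values come from the table above.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class CompressionConfig:
    # Defaults copied from the options table; the class itself is illustrative.
    compression_threshold_tokens: int = 64000
    max_context_tokens: int = 128000
    keep_first: int = 1
    keep_last: int = 6


def should_compress(total_tokens: int, cfg: CompressionConfig) -> bool:
    """Return True when the conversation exceeds the compression threshold."""
    return total_tokens > cfg.compression_threshold_tokens


def split_for_summary(
    messages: List[dict], cfg: CompressionConfig
) -> Tuple[List[dict], List[dict], List[dict]]:
    """Split messages into (kept head, middle to summarize, kept tail)."""
    n = len(messages)
    head_end = min(cfg.keep_first, n)
    # The tail never overlaps the head, even for short conversations.
    tail_start = max(head_end, n - cfg.keep_last)
    return messages[:head_end], messages[head_end:tail_start], messages[tail_start:]
```

With the defaults, a 10-message conversation keeps message 1 and the last 6 messages, leaving 3 messages in the middle as candidates for summarization.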

|

||||

|

||||

---

|

||||

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

# Async Context Compression(异步上下文压缩)

|

||||

|

||||

<span class="category-badge filter">Filter</span>

|

||||

<span class="version-badge">v1.1.3</span>

|

||||

<span class="version-badge">v1.2.2</span>

|

||||

|

||||

通过智能摘要减少长对话的 token 消耗,同时保持对话连贯。

|

||||

|

||||

@@ -34,6 +34,12 @@ Async Context Compression 过滤器通过以下方式帮助管理长对话的 to

|

||||

- :material-check-all: **Open WebUI v0.7.x 兼容性**:动态数据库会话处理

|

||||

- :material-account-convert: **兼容性提升**:摘要角色改为 `assistant`

|

||||

- :material-shield-check: **稳定性增强**:解决状态管理竞态条件

|

||||

- :material-ruler: **预检上下文检查**:发送前验证上下文是否超限

|

||||

- :material-format-align-justify: **结构感知裁剪**:保留文档结构的智能裁剪

|

||||

- :material-content-cut: **原生工具输出裁剪**:自动裁剪冗长的工具输出(注意:非原生工具调用输出不会完整注入上下文)

|

||||

- :material-chart-bar: **详细 Token 日志**:提供细粒度的 Token 统计

|

||||

- :material-account-search: **智能模型匹配**:自定义模型自动继承基础模型配置

|

||||

- :material-image-off: **多模态支持**:图片内容保留但 Token **不参与计算**

|

||||

|

||||

---

|

||||

|

||||

@@ -64,10 +70,14 @@ graph TD

|

||||

|

||||

| 选项 | 类型 | 默认值 | 说明 |

|

||||

|--------|------|---------|-------------|

|

||||

| `token_threshold` | integer | `4000` | 超过该 token 数触发压缩 |

|

||||

| `preserve_recent` | integer | `5` | 保留不压缩的最近消息数量 |

|

||||

| `summary_model` | string | `"auto"` | 用于摘要的模型 |

|

||||

| `compression_ratio` | float | `0.3` | 目标压缩比例 |

|

||||

| `compression_threshold_tokens` | integer | `64000` | 超过该 token 数触发压缩 |

|

||||

| `max_context_tokens` | integer | `128000` | 上下文硬性上限 |

|

||||

| `keep_first` | integer | `1` | 始终保留的前 N 条消息 |

|

||||

| `keep_last` | integer | `6` | 始终保留的后 N 条消息 |

|

||||

| `summary_model` | string | `None` | 用于摘要的模型 |

|

||||

| `summary_model_max_context` | integer | `0` | 摘要模型的最大上下文 Token 数 |

|

||||

| `max_summary_tokens` | integer | `16384` | 摘要的最大 token 数 |

|

||||

| `enable_tool_output_trimming` | boolean | `false` | 启用长工具输出裁剪 |

|

||||

|

||||

---

|

||||

|

||||

|

||||

57

docs/plugins/filters/folder-memory.md

Normal file


@@ -0,0 +1,57 @@

|

||||

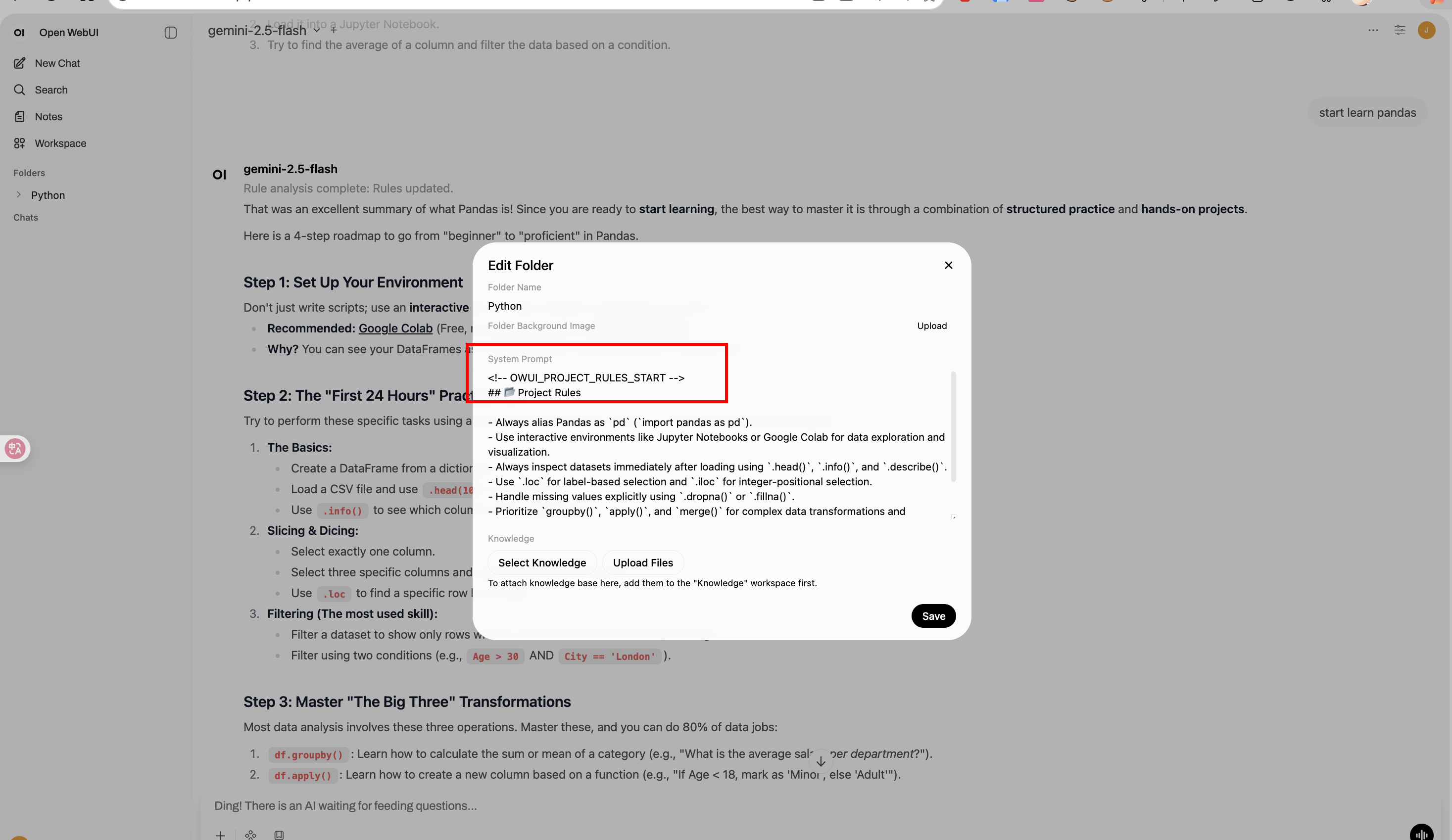

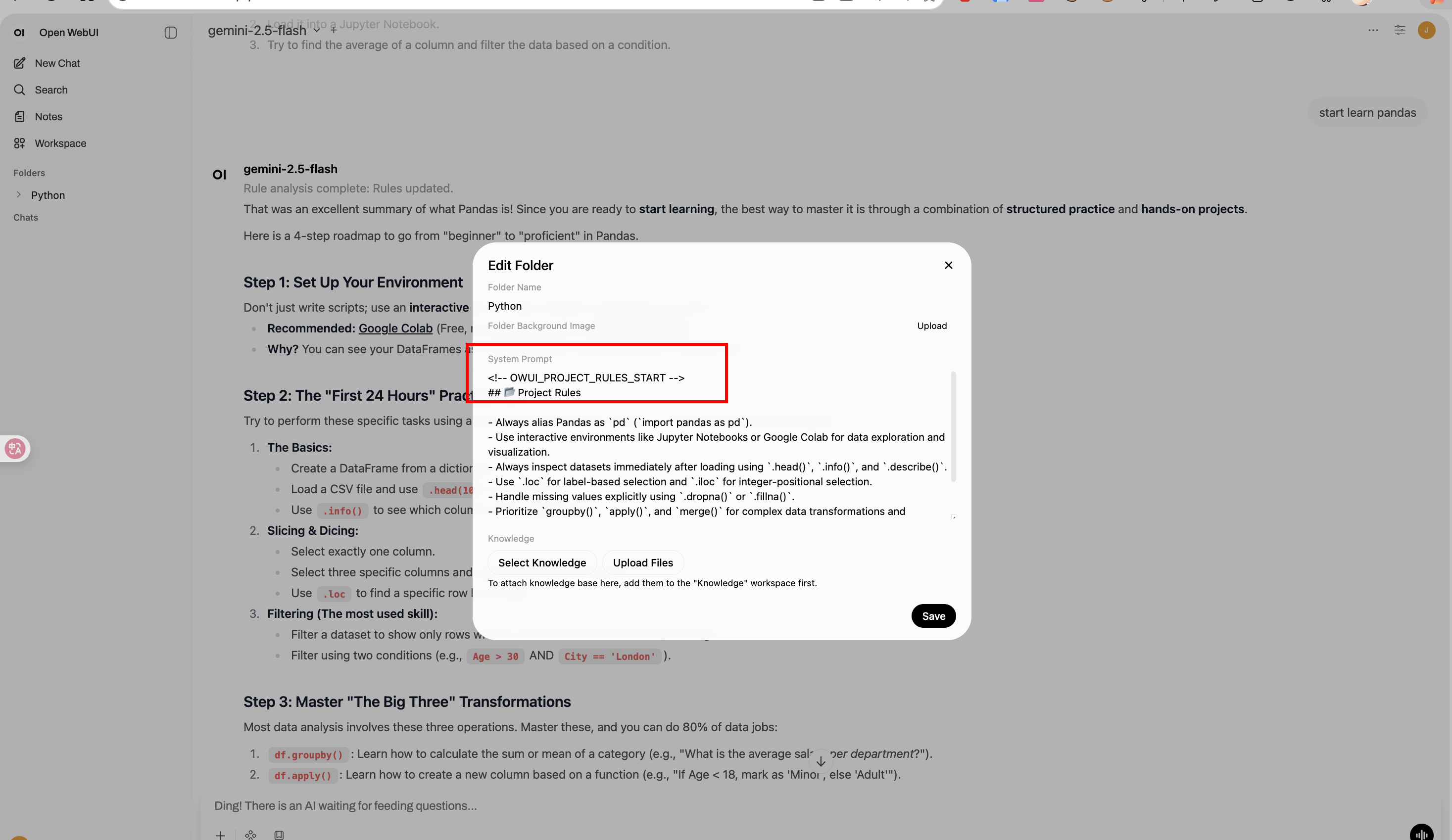

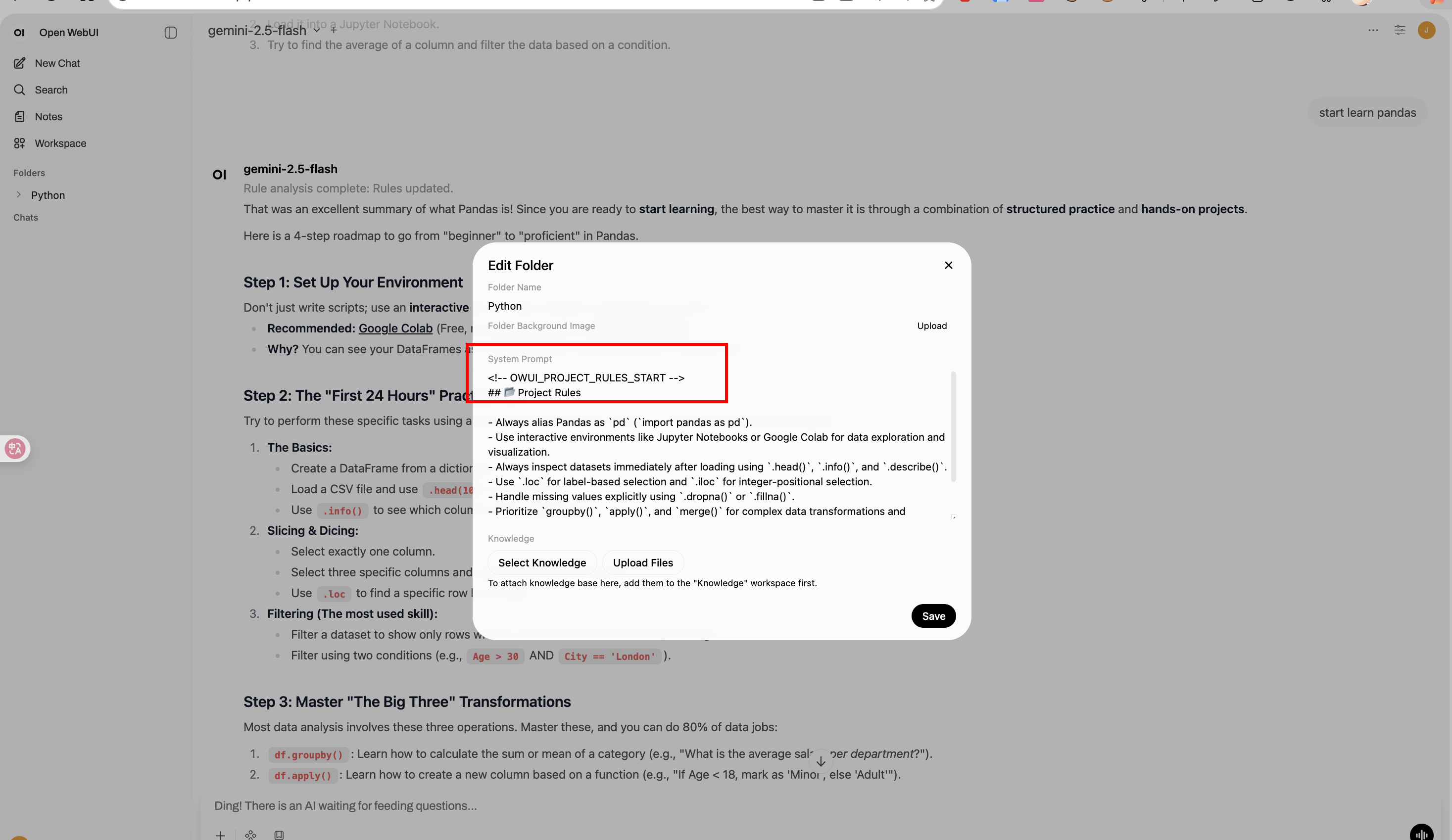

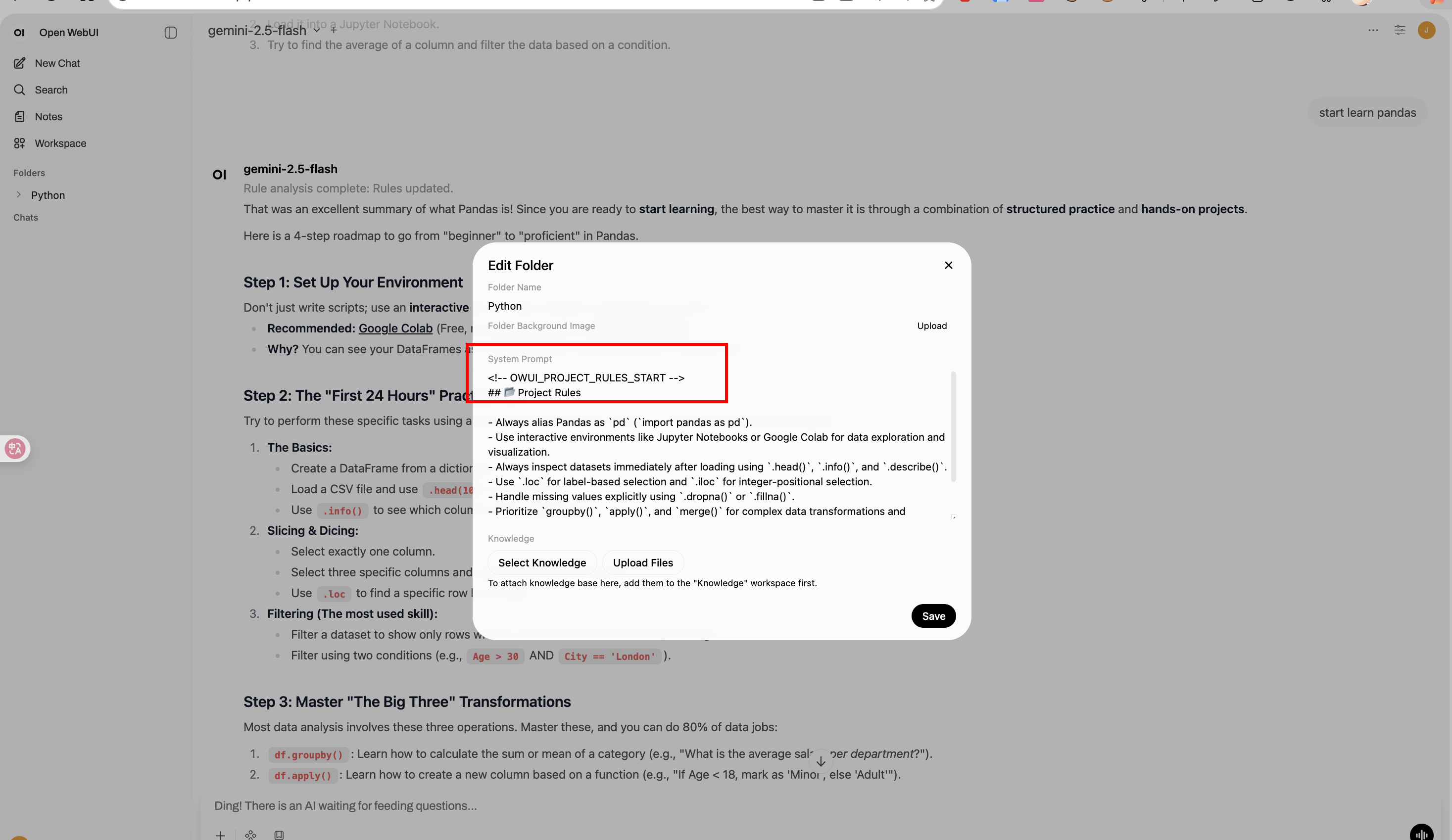

# Folder Memory

**Author:** [Fu-Jie](https://github.com/Fu-Jie/awesome-openwebui) | **Version:** 0.1.0 | **Project:** [Awesome OpenWebUI](https://github.com/Fu-Jie/awesome-openwebui) | **License:** MIT

---

### 📌 What's new in 0.1.0

- **Initial Release**: Automated "Project Rules" management for OpenWebUI folders.
- **Folder-Level Persistence**: Automatically updates folder system prompts with extracted rules.
- **Optimized Performance**: Runs asynchronously and supports `PRIORITY` configuration for seamless integration with other filters.

---

**Folder Memory** is an intelligent context filter plugin for OpenWebUI. It automatically extracts consistent "Project Rules" from ongoing conversations within a folder and injects them back into the folder's system prompt.

This ensures that all future conversations within that folder share the same evolved context and rules, without manual updates.

|

||||

|

||||

## Features

- **Automatic Extraction**: Analyzes chat history every N messages to extract project rules.
- **Non-destructive Injection**: Updates only the specific "Project Rules" block in the system prompt, preserving other instructions.
- **Async Processing**: Runs in the background without blocking the user's chat experience.
- **ORM Integration**: Directly updates folder data using OpenWebUI's internal models for reliability.
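
As an illustration of the non-destructive injection idea, the sketch below replaces only a delimited rules block inside a system prompt and leaves everything else untouched. It is a sketch under stated assumptions: the marker strings `START` and `END` and the function `inject_rules` are hypothetical, and the plugin's real block format may differ.

```python
import re
from typing import List

# Hypothetical markers delimiting the managed block; not taken from the plugin.
START = "<!-- PROJECT_RULES_START -->"
END = "<!-- PROJECT_RULES_END -->"
_BLOCK_RE = re.compile(re.escape(START) + r".*?" + re.escape(END), re.DOTALL)


def inject_rules(system_prompt: str, rules: List[str]) -> str:
    """Insert or replace the rules block, preserving all other prompt text."""
    block = START + "\n" + "\n".join(f"- {rule}" for rule in rules) + "\n" + END
    if _BLOCK_RE.search(system_prompt):
        # A function replacement avoids backslash-escape handling in the rules text.
        return _BLOCK_RE.sub(lambda _match: block, system_prompt, count=1)
    if not system_prompt.strip():
        return block
    return system_prompt.rstrip() + "\n\n" + block
```

Calling `inject_rules` repeatedly with new rules keeps a single block in the prompt, so instructions written by the user before or after the block survive each update.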

|

||||

|

||||

## Prerequisites

- **Conversations must occur inside a folder.** This plugin only triggers when a chat belongs to a folder (i.e., you need to create a folder in OpenWebUI and start a conversation within it).

## Installation

1. Copy `folder_memory.py` to your OpenWebUI `plugins/filters/` directory (or upload via Admin UI).
2. Enable the filter in your **Settings** -> **Filters**.
3. (Optional) Configure the triggering threshold (default: every 10 messages).
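
The "every N messages" trigger from step 3 could be checked roughly as follows. This is only a sketch: how the plugin actually counts messages is not described here, and the function `should_extract` and its `interval` parameter are hypothetical names, with 10 taken from the stated default.

```python
def should_extract(message_count: int, interval: int = 10) -> bool:
    """Return True on every `interval`-th message (hypothetical trigger check)."""
    if interval <= 0 or message_count <= 0:
        return False
    return message_count % interval == 0
```

With the default interval, extraction would run after messages 10, 20, 30, and so on, and never on an empty chat.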

|

||||

|

||||

## Configuration (Valves)

|

||||

|

||||

| Valve | Default | Description |

|

||||

| :--- | :--- | :--- |

|

||||