Compare commits

44 Commits

v2026.01.1...v2026.01.2

| Author | SHA1 | Date |
|---|---|---|
| | 813b019653 | |
| | b0b1542939 | |
| | 15f19d8b8d | |
| | 82253b114c | |
| | e0bfbf6dd4 | |
| | 4689e80e7a | |
| | 556e6c1c67 | |
| | 3ab84a526d | |
| | bdce96f912 | |
| | 4811b99a4b | |
| | fb2a64c07a | |
| | e023e4f2e2 | |
| | 0b16b1e0f4 | |
| | 59073ad7ac | |
| | 8248644c45 | |
| | f38e6394c9 | |
| | 0aaa529c6b | |
| | b81a6562a1 | |
| | c5b10db23a | |
| | d16e444643 | |
| | 8202468099 | |
| | 766e8bd20f | |
| | 1214ab5a8c | |
| | ebddbb25f8 | |
| | 59545e1110 | |
| | 500e090b11 | |
| | a75ee555fa | |
| | 6a8c2164cd | |
| | 7f7efa325a | |
| | 9ba6cb08fc | |
| | 1872271a2d | |
| | 813b50864a | |
| | b18cefe320 | |
| | a54c359fcf | |
| | 8d83221a4a | |
| | 1879000720 | |
| | ba92649a98 | |
| | d2276dcaae | |
| | 25c9d20f3d | |
| | 0d853577df | |
| | f91f3d8692 | |
| | 0f7cad8dfa | |
| | db1a1e7ef0 | |
| | e7de80a059 | |

@@ -90,6 +90,9 @@ Reference: `.github/workflows/release.yml`
 - Action: Automatically updates the plugin code and metadata on OpenWebUI.com using `scripts/publish_plugin.py`.
 - **Auto-Sync**: If a local plugin has no ID but matches an existing published plugin by **Title**, the script will automatically fetch the ID, update the local file, and proceed with the update.
 - Requirement: `OPENWEBUI_API_KEY` secret must be set.
+- **README Link**: When announcing a release, always include the GitHub README URL for the plugin:
+- Format: `https://github.com/Fu-Jie/awesome-openwebui/blob/main/plugins/{type}/{name}/README.md`
+- Example: `https://github.com/Fu-Jie/awesome-openwebui/blob/main/plugins/filters/folder-memory/README.md`

 ### Pull Request Check
 - Workflow: `.github/workflows/plugin-version-check.yml`
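The Auto-Sync and README Link rules in this hunk can be illustrated with a small sketch. This is not the code of `scripts/publish_plugin.py`: the function names and the dictionary fields (`id`, `title`) are assumptions chosen for illustration, and only the URL template is taken from the text above.

```python
from typing import Dict, List, Optional

# URL template copied from the "Format" line of the hunk above.
README_URL_TEMPLATE = (
    "https://github.com/Fu-Jie/awesome-openwebui/blob/main/"
    "plugins/{type}/{name}/README.md"
)


def readme_url(plugin_type: str, name: str) -> str:
    """Build the GitHub README URL to include in a release announcement."""
    return README_URL_TEMPLATE.format(type=plugin_type, name=name)


def resolve_plugin_id(local: Dict, published: List[Dict]) -> Optional[str]:
    """Hypothetical Auto-Sync lookup.

    Returns the local ID if present; otherwise the ID of the first
    published plugin whose title matches exactly, or None if no match.
    The "id" and "title" keys are assumed field names.
    """
    if local.get("id"):
        return local["id"]
    for post in published:
        if post.get("title") == local.get("title"):
            return post.get("id")
    return None


if __name__ == "__main__":
    print(readme_url("filters", "folder-memory"))
    print(resolve_plugin_id({"title": "Folder Memory"},
                            [{"id": "abc123", "title": "Folder Memory"}]))
```

Matching on an exact title is the simplest reading of "matches an existing published plugin by Title"; the real script may normalize titles differently.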

.github/copilot-instructions.md (vendored), 19 changes

@@ -100,13 +100,14 @@ description: Brief description of plugin functiona
 | `author_url` | Author homepage link | `https://github.com/Fu-Jie/awesome-openwebui` |
 | `funding_url` | Sponsorship/project link | `https://github.com/open-webui` |
 | `version` | Semantic version number | `0.1.0`, `1.2.3` |
-| `icon_url` | Icon (Base64-encoded SVG) | See the icon guidelines below |
+| `icon_url` | Icon (Base64-encoded SVG) | **Required** for Action plugins only. Optional for other types. |
 | `requirements` | Extra dependencies (only those not already installed in the OpenWebUI environment) | `python-docx==1.1.2` |
 | `description` | Functional description | `Export the conversation to a Word document` |

 #### Icon Guidelines

 - Icon source: choose an icon that matches the plugin's function from [Lucide Icons](https://lucide.dev/icons/)
+- Scope: **required** for Action plugins, optional for other plugins
 - Format: Base64-encoded SVG
 - How to obtain: download the SVG from Lucide, then Base64-encode it
 - Example format:
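The "download the SVG, then Base64-encode it" step from the icon guidelines could look roughly like the sketch below. The `data:image/svg+xml;base64,` prefix is an assumption about how the encoded icon is stored in `icon_url` (the hunk is cut off before its own example), and `svg_to_icon_url` is a hypothetical helper name.

```python
import base64


def svg_to_icon_url(svg_text: str) -> str:
    """Encode SVG markup as a Base64 data URI.

    Assumption: icon_url expects a data URI; the source only states that
    the icon is a Base64-encoded SVG.
    """
    encoded = base64.b64encode(svg_text.encode("utf-8")).decode("ascii")
    return "data:image/svg+xml;base64," + encoded


if __name__ == "__main__":
    # Placeholder SVG; in practice this would be the file downloaded from Lucide.
    sample = '<svg xmlns="http://www.w3.org/2000/svg" width="24" height="24"></svg>'
    print(svg_to_icon_url(sample))
```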

@@ -822,6 +823,22 @@ Filter instances are **singletons**.

 #### Commit Message Conventions
 Use the Conventional Commits format (`feat`, `fix`, `docs`, etc.).
+The commit title and body **must** clearly describe the change so that it is readable and traceable on the Release page.
+
+Requirements:
+- The title must state what was done and the scope of impact (avoid vague wording).
+- The body must list the key changes (1-3 items), each corresponding to an actual modification.
+- If the change affects users or plugin behavior, the body must state the impact and include migration notes.
+
+Recommended format:
+- `feat(actions): add export settings panel`
+- `fix(filters): handle empty metadata to avoid crash`
+- `docs(plugins): update bilingual README structure`
+
+Body example:
+- Add valves for export format selection
+- Update README/README_CN to include What's New section
+- Migration: default TITLE_SOURCE changed to chat_title

 ### 4. 🤖 Git Operations (Agent Rules)

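The commit message rules added in this hunk can be checked mechanically. The sketch below is one possible validator, not something the repository is known to ship: the allowed type list beyond `feat`, `fix`, and `docs` is an assumption, and both function names are hypothetical.

```python
import re

# feat/fix/docs come from the guideline; the remaining types are assumed.
ALLOWED_TYPES = ("feat", "fix", "docs", "refactor", "test", "chore")

# type, optional (scope), optional "!", then ": " and a non-empty summary.
TITLE_RE = re.compile(
    r"^(?P<type>[a-z]+)(?:\((?P<scope>[^()\s]+)\))?!?: (?P<summary>\S.*)$"
)


def check_commit_title(title: str) -> bool:
    """Return True if the title follows the Conventional Commits shape."""
    match = TITLE_RE.match(title)
    return bool(match) and match.group("type") in ALLOWED_TYPES


def check_commit_body(body: str) -> bool:
    """Return True if the body lists between 1 and 3 bullet points."""
    bullets = [line for line in body.splitlines() if line.startswith("- ")]
    return 1 <= len(bullets) <= 3


if __name__ == "__main__":
    print(check_commit_title("feat(actions): add export settings panel"))
    print(check_commit_body(
        "- Add valves for export format selection\n"
        "- Update README/README_CN to include What's New section\n"
        "- Migration: default TITLE_SOURCE changed to chat_title"
    ))
```

A check like this only enforces the format; whether the title avoids vague wording still needs human review.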

README.md, 29 changes

@@ -10,28 +10,28 @@ A collection of enhancements, plugins, and prompts for [OpenWebUI](https://githu
 <!-- STATS_START -->
 ## 📊 Community Stats

-> 🕐 Auto-updated: 2026-01-19 18:11
+> 🕐 Auto-updated: 2026-01-26 15:14

 | 👤 Author | 👥 Followers | ⭐ Points | 🏆 Contributions |
 |:---:|:---:|:---:|:---:|
-| [Fu-Jie](https://openwebui.com/u/Fu-Jie) | **133** | **134** | **25** |
+| [Fu-Jie](https://openwebui.com/u/Fu-Jie) | **158** | **152** | **31** |

 | 📝 Posts | ⬇️ Downloads | 👁️ Views | 👍 Upvotes | 💾 Saves |
 |:---:|:---:|:---:|:---:|:---:|
-| **16** | **1792** | **21276** | **120** | **135** |
+| **19** | **2388** | **27294** | **138** | **183** |

 ### 🔥 Top 6 Popular Plugins

-> 🕐 Auto-updated: 2026-01-19 18:11
+> 🕐 Auto-updated: 2026-01-26 15:14

 | Rank | Plugin | Version | Downloads | Views | Updated |
 |:---:|------|:---:|:---:|:---:|:---:|
-| 🥇 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | 0.9.1 | 532 | 4822 | 2026-01-17 |
-| 🥈 | [📊 Smart Infographic (AntV)](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | 1.4.9 | 260 | 2514 | 2026-01-18 |
-| 🥉 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | 0.3.7 | 209 | 800 | 2026-01-07 |
-| 4️⃣ | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | 1.1.3 | 180 | 1975 | 2026-01-17 |
-| 5️⃣ | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | 0.4.3 | 158 | 1377 | 2026-01-17 |
-| 6️⃣ | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | 0.2.4 | 138 | 2329 | 2026-01-17 |
+| 🥇 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | 0.9.1 | 629 | 5600 | 2026-01-17 |
+| 🥈 | [Smart Infographic](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | 1.4.9 | 410 | 3621 | 2026-01-25 |
+| 🥉 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | 0.3.7 | 255 | 1039 | 2026-01-07 |
+| 4️⃣ | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | 0.4.3 | 229 | 1839 | 2026-01-17 |
+| 5️⃣ | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | 1.2.2 | 227 | 2461 | 2026-01-21 |
+| 6️⃣ | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | 0.2.4 | 165 | 2674 | 2026-01-17 |

 *See full stats in [Community Stats Report](./docs/community-stats.md)*
 <!-- STATS_END -->
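The swap between ranks 4 and 5 in this hunk is consistent with the table being sorted by download count. The sketch below reproduces the new ordering under that assumption; the sort key is inferred from the numbers, not confirmed by the stats generator, and `top_n` is a hypothetical helper.

```python
from typing import List, Tuple

# (plugin, downloads) pairs taken from the updated table above,
# deliberately listed out of order.
POSTS: List[Tuple[str, int]] = [
    ("Async Context Compression", 227),
    ("Flash Card", 165),
    ("Smart Infographic", 410),
    ("Export to Word (Enhanced)", 229),
    ("Smart Mind Map", 629),
    ("Export to Excel", 255),
]


def top_n(posts: List[Tuple[str, int]], n: int = 6) -> List[Tuple[str, int]]:
    """Return the n most-downloaded posts, highest first (assumed ranking rule)."""
    return sorted(posts, key=lambda post: post[1], reverse=True)[:n]


if __name__ == "__main__":
    for rank, (name, downloads) in enumerate(top_n(POSTS), start=1):
        print(rank, name, downloads)
```

With 229 downloads against 227, Export to Word (Enhanced) moves ahead of Async Context Compression, matching the new table.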

@@ -43,6 +43,7 @@ A collection of enhancements, plugins, and prompts for [OpenWebUI](https://githu
 Located in the `plugins/` directory, containing Python-based enhancements:

 #### Actions
+
 - **Smart Mind Map** (`smart-mind-map`): Generates interactive mind maps from text.
 - **Smart Infographic** (`infographic`): Transforms text into professional infographics using AntV.
 - **Flash Card** (`flash-card`): Quickly generates beautiful flashcards for learning.

@@ -51,11 +52,18 @@ Located in the `plugins/` directory, containing Python-based enhancements:
 - **Export to Word** (`export_to_docx`): Exports chat history to Word documents.

 #### Filters
+
 - **Async Context Compression** (`async-context-compression`): Optimizes token usage via context compression.
 - **Context Enhancement** (`context_enhancement_filter`): Enhances chat context.
+- **Folder Memory** (`folder-memory`): Automatically extracts project rules from conversations and injects them into the folder's system prompt.
 - **Markdown Normalizer** (`markdown_normalizer`): Fixes common Markdown formatting issues in LLM outputs.

+#### Pipes
+
+- **GitHub Copilot SDK** (`github-copilot-sdk`): Official GitHub Copilot SDK integration. Supports dynamic models, multi-turn conversation, streaming, multimodal input, and infinite sessions.
+
 #### Pipelines
+
 - **MoE Prompt Refiner** (`moe_prompt_refiner`): Refines prompts for Mixture of Experts (MoE) summary requests to generate high-quality comprehensive reports.

 ### 🎯 Prompts

@@ -100,6 +108,7 @@ This project is a collection of resources and does not require a Python environm
 ### Contributing
+
 If you have great prompts or plugins to share:

 1. Fork this repository.
 2. Add your files to the appropriate `prompts/` or `plugins/` directory.
 3. Submit a Pull Request.

README_CN.md, 27 changes

@@ -7,28 +7,28 @@ A collection of OpenWebUI enhancements, including personally developed and collected plugins, prompts
 <!-- STATS_START -->
 ## 📊 Community Stats

-> 🕐 Auto-updated at 2026-01-19 18:11
+> 🕐 Auto-updated at 2026-01-26 15:14

 | 👤 Author | 👥 Followers | ⭐ Points | 🏆 Contributions |
 |:---:|:---:|:---:|:---:|
-| [Fu-Jie](https://openwebui.com/u/Fu-Jie) | **133** | **134** | **25** |
+| [Fu-Jie](https://openwebui.com/u/Fu-Jie) | **158** | **152** | **31** |

 | 📝 Posts | ⬇️ Downloads | 👁️ Views | 👍 Upvotes | 💾 Saves |
 |:---:|:---:|:---:|:---:|:---:|
-| **16** | **1792** | **21276** | **120** | **135** |
+| **19** | **2388** | **27294** | **138** | **183** |

 ### 🔥 Top 6 Popular Plugins

-> 🕐 Auto-updated at 2026-01-19 18:11
+> 🕐 Auto-updated at 2026-01-26 15:14

 | Rank | Plugin | Version | Downloads | Views | Updated |
 |:---:|------|:---:|:---:|:---:|:---:|
-| 🥇 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | 0.9.1 | 532 | 4822 | 2026-01-17 |
-| 🥈 | [📊 Smart Infographic (AntV)](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | 1.4.9 | 260 | 2514 | 2026-01-18 |
-| 🥉 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | 0.3.7 | 209 | 800 | 2026-01-07 |
-| 4️⃣ | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | 1.1.3 | 180 | 1975 | 2026-01-17 |
-| 5️⃣ | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | 0.4.3 | 158 | 1377 | 2026-01-17 |
-| 6️⃣ | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | 0.2.4 | 138 | 2329 | 2026-01-17 |
+| 🥇 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | 0.9.1 | 629 | 5600 | 2026-01-17 |
+| 🥈 | [Smart Infographic](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | 1.4.9 | 410 | 3621 | 2026-01-25 |
+| 🥉 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | 0.3.7 | 255 | 1039 | 2026-01-07 |
+| 4️⃣ | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | 0.4.3 | 229 | 1839 | 2026-01-17 |
+| 5️⃣ | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | 1.2.2 | 227 | 2461 | 2026-01-21 |
+| 6️⃣ | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | 0.2.4 | 165 | 2674 | 2026-01-17 |

 *See full stats in the [Community Stats Report](./docs/community-stats.zh.md)*
 <!-- STATS_END -->

@@ -40,6 +40,7 @@ A collection of OpenWebUI enhancements, including personally developed and collected plugins, prompts
 Located in the `plugins/` directory, containing various enhancement plugins written in Python:

 #### Actions (Interaction Enhancements)
+
 - **Smart Mind Map** (`smart-mind-map`): Intelligently analyzes text and generates interactive mind maps.
 - **Smart Infographic** (`infographic`): Smart infographic generator based on AntV.
 - **Flash Card** (`flash-card`): Quickly generates beautiful flashcards for study and memorization.

@@ -48,17 +49,22 @@ A collection of OpenWebUI enhancements, including personally developed and collected plugins, prompts
 - **Export to Word** (`export_to_docx`): Exports conversation content to a Word document.

 #### Filters (Message Processing)
+
 - **Async Context Compression** (`async-context-compression`): Asynchronous context compression that optimizes token usage.
 - **Context Enhancement** (`context_enhancement_filter`): Context enhancement filter.
+- **Folder Memory** (`folder-memory`): Automatically extracts project rules from conversations and injects them into the folder's system prompt.
 - **Gemini Manifold Companion** (`gemini_manifold_companion`): Companion enhancements for Gemini Manifold.
 - **Gemini Multimodal Filter** (`web_gemini_multimodel_filter`): Provides multimodal capabilities (PDF, Office, video, etc.) for any model, with smart routing and subtitle refinement.
 - **Markdown Normalizer** (`markdown_normalizer`): Fixes common Markdown formatting issues in LLM outputs.
 - **Multi-Model Context Merger** (`multi_model_context_merger`): Automatically merges and injects context from multi-model responses.

 #### Pipes (Model Pipes)
+
+- **GitHub Copilot SDK** (`github-copilot-sdk`): Official GitHub Copilot SDK integration. Supports dynamic models, multi-turn conversation, streaming output, image input, and infinite sessions.
 - **Gemini Manifold** (`gemini_mainfold`): Pipe that integrates Gemini models.

 #### Pipelines (Workflow Pipelines)
+
 - **MoE Prompt Refiner** (`moe_prompt_refiner`): Refines prompts for multi-model (MoE) summary requests to generate high-quality comprehensive reports.

 ### 🎯 Prompts

@@ -106,6 +112,7 @@ A collection of OpenWebUI enhancements, including personally developed and collected plugins, prompts
 ### Contributing
+
 If you have high-quality prompts or plugins to share:

 1. Fork this repository.
 2. Add your files to the appropriate `prompts/` or `plugins/` directory.
 3. Submit a Pull Request.

@@ -1,7 +1,7 @@
 {
   "schemaVersion": 1,
   "label": "downloads",
-  "message": "1.8k",
+  "message": "2.4k",
   "color": "blue",
   "namedLogo": "openwebui"
 }
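The change from `1.8k` to `2.4k` matches the total download counts elsewhere in this comparison (1792 and 2388) rounded to one decimal place. A minimal sketch of how such a badge file could be produced follows; the rounding rule and both helper names are assumptions, while the JSON keys are the ones visible in the hunk above.

```python
import json
from typing import Dict, Optional


def format_count(value: int) -> str:
    """Abbreviate counts of 1000 or more as e.g. '2.4k' (assumed rounding rule)."""
    if value >= 1000:
        return f"{value / 1000:.1f}k"
    return str(value)


def build_badge(label: str, value: int, color: str,
                named_logo: Optional[str] = None) -> Dict:
    """Assemble a badge payload using the keys seen in the diff."""
    badge = {
        "schemaVersion": 1,
        "label": label,
        "message": format_count(value),
        "color": color,
    }
    if named_logo is not None:
        badge["namedLogo"] = named_logo
    return badge


if __name__ == "__main__":
    print(json.dumps(build_badge("downloads", 2388, "blue", "openwebui"), indent=2))
```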

@@ -1,6 +1,6 @@
 {
   "schemaVersion": 1,
   "label": "followers",
-  "message": "133",
+  "message": "158",
   "color": "blue"
 }

@@ -1,6 +1,6 @@
 {
   "schemaVersion": 1,
   "label": "plugins",
-  "message": "16",
+  "message": "19",
   "color": "green"
 }

@@ -1,6 +1,6 @@
 {
   "schemaVersion": 1,
   "label": "points",
-  "message": "134",
+  "message": "152",
   "color": "orange"
 }

@@ -1,6 +1,6 @@
 {
   "schemaVersion": 1,
   "label": "upvotes",
-  "message": "120",
+  "message": "138",
   "color": "brightgreen"
 }

@@ -1,14 +1,16 @@
 {
-  "total_posts": 16,
-  "total_downloads": 1792,
-  "total_views": 21276,
-  "total_upvotes": 120,
+  "total_posts": 19,
+  "total_downloads": 2388,
+  "total_views": 27294,
+  "total_upvotes": 138,
   "total_downvotes": 2,
-  "total_saves": 135,
-  "total_comments": 24,
+  "total_saves": 183,
+  "total_comments": 33,
   "by_type": {
+    "pipe": 1,
     "action": 14,
-    "unknown": 2
+    "unknown": 3,
+    "filter": 1
   },
   "posts": [
     {
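The summary fields at the top of this file can be derived from the `posts` array; in the new version the `by_type` counts (1 + 14 + 3 + 1) add up to `total_posts` (19). The sketch below shows one way to compute them. It is not the repository's stats script: `summarize` is a hypothetical name, and treating a missing `type` as `"unknown"` is an assumption.

```python
from collections import Counter
from typing import Dict, List


def summarize(posts: List[Dict]) -> Dict:
    """Aggregate per-post fields into the summary keys seen in the diff."""
    return {
        "total_posts": len(posts),
        "total_downloads": sum(p.get("downloads", 0) for p in posts),
        "total_views": sum(p.get("views", 0) for p in posts),
        # Assumption: posts without a "type" are counted as "unknown".
        "by_type": dict(Counter(p.get("type", "unknown") for p in posts)),
    }


if __name__ == "__main__":
    # Three entries with values taken from later hunks of this file.
    sample = [
        {"type": "action", "downloads": 629, "views": 5600},
        {"type": "action", "downloads": 410, "views": 3621},
        {"type": "filter", "downloads": 26, "views": 725},
    ]
    print(summarize(sample))
```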

@@ -18,29 +20,29 @@
       "version": "0.9.1",
       "author": "Fu-Jie",
       "description": "Intelligently analyzes text content and generates interactive mind maps to help users structure and visualize knowledge.",
-      "downloads": 532,
-      "views": 4822,
-      "upvotes": 15,
-      "saves": 28,
+      "downloads": 629,
+      "views": 5600,
+      "upvotes": 16,
+      "saves": 37,
       "comments": 11,
       "created_at": "2025-12-30",
       "updated_at": "2026-01-17",
       "url": "https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a"
     },
     {
-      "title": "📊 Smart Infographic (AntV)",
+      "title": "Smart Infographic",
       "slug": "smart_infographic_ad6f0c7f",
       "type": "action",
       "version": "1.4.9",
       "author": "Fu-Jie",
       "description": "AI-powered infographic generator based on AntV Infographic. Supports professional templates, auto-icon matching, and SVG/PNG downloads.",
-      "downloads": 260,
-      "views": 2514,
-      "upvotes": 14,
-      "saves": 20,
-      "comments": 3,
+      "downloads": 410,
+      "views": 3621,
+      "upvotes": 18,
+      "saves": 27,
+      "comments": 7,
       "created_at": "2025-12-28",
-      "updated_at": "2026-01-18",
+      "updated_at": "2026-01-25",
       "url": "https://openwebui.com/posts/smart_infographic_ad6f0c7f"
     },
     {

@@ -50,31 +52,15 @@
       "version": "0.3.7",
       "author": "Fu-Jie",
       "description": "Extracts tables from chat messages and exports them to Excel (.xlsx) files with smart formatting.",
-      "downloads": 209,
-      "views": 800,
+      "downloads": 255,
+      "views": 1039,
       "upvotes": 4,
-      "saves": 5,
+      "saves": 6,
       "comments": 0,
       "created_at": "2025-05-30",
       "updated_at": "2026-01-07",
       "url": "https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d"
     },
-    {
-      "title": "Async Context Compression",
-      "slug": "async_context_compression_b1655bc8",
-      "type": "action",
-      "version": "1.1.3",
-      "author": "Fu-Jie",
-      "description": "Reduces token consumption in long conversations while maintaining coherence through intelligent summarization and message compression.",
-      "downloads": 180,
-      "views": 1975,
-      "upvotes": 9,
-      "saves": 19,
-      "comments": 0,
-      "created_at": "2025-11-08",
-      "updated_at": "2026-01-17",
-      "url": "https://openwebui.com/posts/async_context_compression_b1655bc8"
-    },
     {
       "title": "Export to Word (Enhanced)",
       "slug": "export_to_word_enhanced_formatting_fca6a315",

@@ -82,15 +68,31 @@
       "version": "0.4.3",
       "author": "Fu-Jie",
       "description": "Export current conversation from Markdown to Word (.docx) with Mermaid diagrams rendered client-side (Mermaid.js, SVG+PNG), LaTeX math, real hyperlinks, improved tables, syntax highlighting, and blockquote support.",
-      "downloads": 158,
-      "views": 1377,
+      "downloads": 229,
+      "views": 1839,
       "upvotes": 8,
-      "saves": 16,
+      "saves": 21,
       "comments": 0,
       "created_at": "2026-01-03",
       "updated_at": "2026-01-17",
       "url": "https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315"
     },
+    {
+      "title": "Async Context Compression",
+      "slug": "async_context_compression_b1655bc8",
+      "type": "action",
+      "version": "1.2.2",
+      "author": "Fu-Jie",
+      "description": "Reduces token consumption in long conversations while maintaining coherence through intelligent summarization and message compression.",
+      "downloads": 227,
+      "views": 2461,
+      "upvotes": 9,
+      "saves": 27,
+      "comments": 0,
+      "created_at": "2025-11-08",
+      "updated_at": "2026-01-21",
+      "url": "https://openwebui.com/posts/async_context_compression_b1655bc8"
+    },
     {
       "title": "Flash Card",
       "slug": "flash_card_65a2ea8f",

@@ -98,10 +100,10 @@
       "version": "0.2.4",
       "author": "Fu-Jie",
       "description": "Quickly generates beautiful flashcards from text, extracting key points and categories.",
-      "downloads": 138,
-      "views": 2329,
-      "upvotes": 10,
-      "saves": 10,
+      "downloads": 165,
+      "views": 2674,
+      "upvotes": 11,
+      "saves": 13,
       "comments": 2,
       "created_at": "2025-12-30",
       "updated_at": "2026-01-17",

@@ -111,16 +113,16 @@
       "title": "Markdown Normalizer",
       "slug": "markdown_normalizer_baaa8732",
       "type": "action",
-      "version": "1.2.3",
+      "version": "1.2.4",
       "author": "Fu-Jie",
       "description": "A content normalizer filter that fixes common Markdown formatting issues in LLM outputs, such as broken code blocks, LaTeX formulas, and list formatting.",
-      "downloads": 84,
-      "views": 2100,
+      "downloads": 148,
+      "views": 2762,
       "upvotes": 10,
-      "saves": 17,
+      "saves": 20,
       "comments": 5,
       "created_at": "2026-01-12",
-      "updated_at": "2026-01-17",
+      "updated_at": "2026-01-19",
       "url": "https://openwebui.com/posts/markdown_normalizer_baaa8732"
     },
     {

@@ -130,10 +132,10 @@
       "version": "1.0.0",
       "author": "Fu-Jie",
       "description": "A comprehensive thinking lens that dives deep into any content - from context to logic, insights, and action paths.",
-      "downloads": 68,
-      "views": 663,
+      "downloads": 91,
+      "views": 839,
       "upvotes": 4,
-      "saves": 6,
+      "saves": 8,
       "comments": 0,
       "created_at": "2026-01-08",
       "updated_at": "2026-01-08",

@@ -146,11 +148,11 @@
       "version": "0.4.3",
       "author": "Fu-Jie",
       "description": "Exports the conversation to Word (.docx), with support for Mermaid diagrams (client-side rendered SVG+PNG), LaTeX math, real hyperlinks, enhanced table formatting, code highlighting, and blockquotes.",
-      "downloads": 63,
-      "views": 1305,
+      "downloads": 87,
+      "views": 1614,
       "upvotes": 11,
-      "saves": 3,
-      "comments": 1,
+      "saves": 4,
+      "comments": 4,
       "created_at": "2026-01-04",
       "updated_at": "2026-01-17",
       "url": "https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0"

@@ -162,8 +164,8 @@
       "version": "1.4.9",
       "author": "Fu-Jie",
       "description": "Smart infographic generation plugin based on AntV Infographic. Supports multiple professional templates and automatic icon matching, and provides SVG/PNG downloads.",
-      "downloads": 42,
-      "views": 683,
+      "downloads": 46,
+      "views": 781,
       "upvotes": 6,
       "saves": 0,
       "comments": 0,

@@ -178,15 +180,47 @@
       "version": "0.9.1",
       "author": "Fu-Jie",
       "description": "Intelligently analyzes text content and generates interactive mind maps to help users structure and visualize knowledge.",
-      "downloads": 22,
-      "views": 398,
-      "upvotes": 3,
+      "downloads": 27,
+      "views": 447,
+      "upvotes": 4,
       "saves": 1,
       "comments": 0,
       "created_at": "2025-12-31",
       "updated_at": "2026-01-17",
       "url": "https://openwebui.com/posts/智能生成交互式思维导图帮助用户可视化知识_8d4b097b"
     },
+    {
+      "title": "📂 Folder Memory – Auto-Evolving Project Context",
+      "slug": "folder_memory_auto_evolving_project_context_4a9875b2",
+      "type": "filter",
+      "version": "0.1.0",
+      "author": "Fu-Jie",
+      "description": "Automatically extracts project rules from conversations and injects them into the folder's system prompt.",
+      "downloads": 26,
+      "views": 725,
+      "upvotes": 3,
+      "saves": 4,
+      "comments": 0,
+      "created_at": "2026-01-20",
+      "updated_at": "2026-01-20",
+      "url": "https://openwebui.com/posts/folder_memory_auto_evolving_project_context_4a9875b2"
+    },
+    {
+      "title": "异步上下文压缩",
+      "slug": "异步上下文压缩_5c0617cb",
+      "type": "action",
+      "version": "1.2.2",
+      "author": "Fu-Jie",
+      "description": "Reduces token consumption in long conversations through intelligent summarization and message compression while keeping the conversation coherent.",
+      "downloads": 20,
+      "views": 486,
+      "upvotes": 5,
+      "saves": 1,
+      "comments": 0,
+      "created_at": "2025-11-08",
+      "updated_at": "2026-01-21",
+      "url": "https://openwebui.com/posts/异步上下文压缩_5c0617cb"
+    },
     {
       "title": "闪记卡 (Flash Card)",
       "slug": "闪记卡生成插件_4a31eac3",

@@ -194,31 +228,15 @@

|

|||||||

"version": "0.2.4",

|

"version": "0.2.4",

|

||||||

"author": "Fu-Jie",

|

"author": "Fu-Jie",

|

||||||

"description": "快速将文本提炼为精美的学习记忆卡片,支持核心要点提取与分类。",

|

"description": "快速将文本提炼为精美的学习记忆卡片,支持核心要点提取与分类。",

|

||||||

"downloads": 16,

|

"downloads": 19,

|

||||||

"views": 443,

|

"views": 507,

|

||||||

"upvotes": 5,

|

"upvotes": 6,

|

||||||

"saves": 1,

|

"saves": 1,

|

||||||

"comments": 0,

|

"comments": 0,

|

||||||

"created_at": "2025-12-30",

|

"created_at": "2025-12-30",

|

||||||

"updated_at": "2026-01-17",

|

"updated_at": "2026-01-17",

|

||||||

"url": "https://openwebui.com/posts/闪记卡生成插件_4a31eac3"

|

"url": "https://openwebui.com/posts/闪记卡生成插件_4a31eac3"

|

||||||

},

|

},

|

||||||

{

|

|

||||||

"title": "异步上下文压缩",

|

|

||||||

"slug": "异步上下文压缩_5c0617cb",

|

|

||||||

"type": "action",

|

|

||||||

"version": "1.1.3",

|

|

||||||

"author": "Fu-Jie",

|

|

||||||

"description": "通过智能摘要和消息压缩,降低长对话的 token 消耗,同时保持对话连贯性。",

|

|

||||||

"downloads": 14,

|

|

||||||

"views": 351,

|

|

||||||

"upvotes": 5,

|

|

||||||

"saves": 1,

|

|

||||||

"comments": 0,

|

|

||||||

"created_at": "2025-11-08",

|

|

||||||

"updated_at": "2026-01-17",

|

|

||||||

"url": "https://openwebui.com/posts/异步上下文压缩_5c0617cb"

|

|

||||||

},

|

|

||||||

{

|

{

|

||||||

"title": "精读",

|

"title": "精读",

|

||||||

"slug": "精读_99830b0f",

|

"slug": "精读_99830b0f",

|

||||||

@@ -226,8 +244,8 @@

|

|||||||

"version": "1.0.0",

|

"version": "1.0.0",

|

||||||

"author": "Fu-Jie",

|

"author": "Fu-Jie",

|

||||||

"description": "全方位的思维透镜 —— 从背景全景到逻辑脉络,从深度洞察到行动路径。",

|

"description": "全方位的思维透镜 —— 从背景全景到逻辑脉络,从深度洞察到行动路径。",

|

||||||

"downloads": 6,

|

"downloads": 9,

|

||||||

"views": 259,

|

"views": 306,

|

||||||

"upvotes": 3,

|

"upvotes": 3,

|

||||||

"saves": 1,

|

"saves": 1,

|

||||||

"comments": 0,

|

"comments": 0,

|

||||||

@@ -235,6 +253,38 @@

|

|||||||

"updated_at": "2026-01-08",

|

"updated_at": "2026-01-08",

|

||||||

"url": "https://openwebui.com/posts/精读_99830b0f"

|

"url": "https://openwebui.com/posts/精读_99830b0f"

|

||||||

},

|

},

|

||||||

|

{

|

||||||

|

"title": "GitHub Copilot Official SDK Pipe",

|

||||||

|

"slug": "github_copilot_official_sdk_pipe_ce96f7b4",

|

||||||

|

"type": "pipe",

|

||||||

|

"version": "0.1.1",

|

||||||

|

"author": "Fu-Jie",

|

||||||

|

"description": "Integrate GitHub Copilot SDK. Supports dynamic models, multi-turn conversation, streaming, multimodal input, and infinite sessions (context compaction).",

|

||||||

|

"downloads": 0,

|

||||||

|

"views": 8,

|

||||||

|

"upvotes": 1,

|

||||||

|

"saves": 0,

|

||||||

|

"comments": 0,

|

||||||

|

"created_at": "2026-01-26",

|

||||||

|

"updated_at": "2026-01-26",

|

||||||

|

"url": "https://openwebui.com/posts/github_copilot_official_sdk_pipe_ce96f7b4"

|

||||||

|

},

|

||||||

|

{

|

||||||

|

"title": "🚀 Open WebUI Prompt Plus: AI-Powered Prompt Manager",

|

||||||

|

"slug": "open_webui_prompt_plus_ai_powered_prompt_manager_s_15fa060e",

|

||||||

|

"type": "unknown",

|

||||||

|

"version": "",

|

||||||

|

"author": "",

|

||||||

|

"description": "",

|

||||||

|

"downloads": 0,

|

||||||

|

"views": 222,

|

||||||

|

"upvotes": 6,

|

||||||

|

"saves": 4,

|

||||||

|

"comments": 2,

|

||||||

|

"created_at": "2026-01-25",

|

||||||

|

"updated_at": "2026-01-25",

|

||||||

|

"url": "https://openwebui.com/posts/open_webui_prompt_plus_ai_powered_prompt_manager_s_15fa060e"

|

||||||

|

},

|

||||||

{

|

{

|

||||||

"title": "Review of Claude Haiku 4.5",

|

"title": "Review of Claude Haiku 4.5",

|

||||||

"slug": "review_of_claude_haiku_45_41b0db39",

|

"slug": "review_of_claude_haiku_45_41b0db39",

|

||||||

@@ -243,7 +293,7 @@

|

|||||||

"author": "",

|

"author": "",

|

||||||

"description": "",

|

"description": "",

|

||||||

"downloads": 0,

|

"downloads": 0,

|

||||||

"views": 59,

|

"views": 93,

|

||||||

"upvotes": 1,

|

"upvotes": 1,

|

||||||

"saves": 0,

|

"saves": 0,

|

||||||

"comments": 0,

|

"comments": 0,

|

||||||

@@ -259,9 +309,9 @@

|

|||||||

"author": "",

|

"author": "",

|

||||||

"description": "",

|

"description": "",

|

||||||

"downloads": 0,

|

"downloads": 0,

|

||||||

"views": 1198,

|

"views": 1270,

|

||||||

"upvotes": 12,

|

"upvotes": 12,

|

||||||

"saves": 7,

|

"saves": 8,

|

||||||

"comments": 2,

|

"comments": 2,

|

||||||

"created_at": "2026-01-10",

|

"created_at": "2026-01-10",

|

||||||

"updated_at": "2026-01-10",

|

"updated_at": "2026-01-10",

|

||||||

@@ -273,11 +323,11 @@

|

|||||||

"name": "Fu-Jie",

|

"name": "Fu-Jie",

|

||||||

"profile_url": "https://openwebui.com/u/Fu-Jie",

|

"profile_url": "https://openwebui.com/u/Fu-Jie",

|

||||||

"profile_image": "https://community.s3.openwebui.com/uploads/users/b15d1348-4347-42b4-b815-e053342d6cb0/profile_d9510745-4bd4-4f8f-a997-4a21847d9300.webp",

|

"profile_image": "https://community.s3.openwebui.com/uploads/users/b15d1348-4347-42b4-b815-e053342d6cb0/profile_d9510745-4bd4-4f8f-a997-4a21847d9300.webp",

|

||||||

"followers": 133,

|

"followers": 158,

|

||||||

"following": 2,

|

"following": 3,

|

||||||

"total_points": 134,

|

"total_points": 152,

|

||||||

"post_points": 118,

|

"post_points": 136,

|

||||||

"comment_points": 16,

|

"comment_points": 16,

|

||||||

"contributions": 25

|

"contributions": 31

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

@@ -1,40 +1,45 @@

|

|||||||

# 📊 OpenWebUI Community Stats Report

|

# 📊 OpenWebUI Community Stats Report

|

||||||

|

|

||||||

> 📅 Updated: 2026-01-19 18:11

|

> 📅 Updated: 2026-01-26 15:14

|

||||||

|

|

||||||

## 📈 Overview

|

## 📈 Overview

|

||||||

|

|

||||||

| Metric | Value |

|

| Metric | Value |

|

||||||

|------|------|

|

|------|------|

|

||||||

| 📝 Total Posts | 16 |

|

| 📝 Total Posts | 19 |

|

||||||

| ⬇️ Total Downloads | 1792 |

|

| ⬇️ Total Downloads | 2388 |

|

||||||

| 👁️ Total Views | 21276 |

|

| 👁️ Total Views | 27294 |

|

||||||

| 👍 Total Upvotes | 120 |

|

| 👍 Total Upvotes | 138 |

|

||||||

| 💾 Total Saves | 135 |

|

| 💾 Total Saves | 183 |

|

||||||

| 💬 Total Comments | 24 |

|

| 💬 Total Comments | 33 |

|

||||||

|

|

||||||

## 📂 By Type

|

## 📂 By Type

|

||||||

|

|

||||||

|

- **pipe**: 1

|

||||||

- **action**: 14

|

- **action**: 14

|

||||||

- **unknown**: 2

|

- **unknown**: 3

|

||||||

|

- **filter**: 1

|

||||||

|

|

||||||

## 📋 Posts List

|

## 📋 Posts List

|

||||||

|

|

||||||

| Rank | Title | Type | Version | Downloads | Views | Upvotes | Saves | Updated |

|

| Rank | Title | Type | Version | Downloads | Views | Upvotes | Saves | Updated |

|

||||||

|:---:|------|:---:|:---:|:---:|:---:|:---:|:---:|:---:|

|

|:---:|------|:---:|:---:|:---:|:---:|:---:|:---:|:---:|

|

||||||

| 1 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | action | 0.9.1 | 532 | 4822 | 15 | 28 | 2026-01-17 |

|

| 1 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | action | 0.9.1 | 629 | 5600 | 16 | 37 | 2026-01-17 |

|

||||||

| 2 | [📊 Smart Infographic (AntV)](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | action | 1.4.9 | 260 | 2514 | 14 | 20 | 2026-01-18 |

|

| 2 | [Smart Infographic](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | action | 1.4.9 | 410 | 3621 | 18 | 27 | 2026-01-25 |

|

||||||

| 3 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | action | 0.3.7 | 209 | 800 | 4 | 5 | 2026-01-07 |

|

| 3 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | action | 0.3.7 | 255 | 1039 | 4 | 6 | 2026-01-07 |

|

||||||

| 4 | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | action | 1.1.3 | 180 | 1975 | 9 | 19 | 2026-01-17 |

|

| 4 | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | action | 0.4.3 | 229 | 1839 | 8 | 21 | 2026-01-17 |

|

||||||

| 5 | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | action | 0.4.3 | 158 | 1377 | 8 | 16 | 2026-01-17 |

|

| 5 | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | action | 1.2.2 | 227 | 2461 | 9 | 27 | 2026-01-21 |

|

||||||

| 6 | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | action | 0.2.4 | 138 | 2329 | 10 | 10 | 2026-01-17 |

|

| 6 | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | action | 0.2.4 | 165 | 2674 | 11 | 13 | 2026-01-17 |

|

||||||

| 7 | [Markdown Normalizer](https://openwebui.com/posts/markdown_normalizer_baaa8732) | action | 1.2.3 | 84 | 2100 | 10 | 17 | 2026-01-17 |

|

| 7 | [Markdown Normalizer](https://openwebui.com/posts/markdown_normalizer_baaa8732) | action | 1.2.4 | 148 | 2762 | 10 | 20 | 2026-01-19 |

|

||||||

| 8 | [Deep Dive](https://openwebui.com/posts/deep_dive_c0b846e4) | action | 1.0.0 | 68 | 663 | 4 | 6 | 2026-01-08 |

|

| 8 | [Deep Dive](https://openwebui.com/posts/deep_dive_c0b846e4) | action | 1.0.0 | 91 | 839 | 4 | 8 | 2026-01-08 |

|

||||||

| 9 | [导出为 Word (增强版)](https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0) | action | 0.4.3 | 63 | 1305 | 11 | 3 | 2026-01-17 |

|

| 9 | [导出为 Word (增强版)](https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0) | action | 0.4.3 | 87 | 1614 | 11 | 4 | 2026-01-17 |

|

||||||

| 10 | [📊 智能信息图 (AntV Infographic)](https://openwebui.com/posts/智能信息图_e04a48ff) | action | 1.4.9 | 42 | 683 | 6 | 0 | 2026-01-17 |

|

| 10 | [📊 智能信息图 (AntV Infographic)](https://openwebui.com/posts/智能信息图_e04a48ff) | action | 1.4.9 | 46 | 781 | 6 | 0 | 2026-01-17 |

|

||||||

| 11 | [思维导图](https://openwebui.com/posts/智能生成交互式思维导图帮助用户可视化知识_8d4b097b) | action | 0.9.1 | 22 | 398 | 3 | 1 | 2026-01-17 |

|

| 11 | [思维导图](https://openwebui.com/posts/智能生成交互式思维导图帮助用户可视化知识_8d4b097b) | action | 0.9.1 | 27 | 447 | 4 | 1 | 2026-01-17 |

|

||||||

| 12 | [闪记卡 (Flash Card)](https://openwebui.com/posts/闪记卡生成插件_4a31eac3) | action | 0.2.4 | 16 | 443 | 5 | 1 | 2026-01-17 |

|

| 12 | [📂 Folder Memory – Auto-Evolving Project Context](https://openwebui.com/posts/folder_memory_auto_evolving_project_context_4a9875b2) | filter | 0.1.0 | 26 | 725 | 3 | 4 | 2026-01-20 |

|

||||||

| 13 | [异步上下文压缩](https://openwebui.com/posts/异步上下文压缩_5c0617cb) | action | 1.1.3 | 14 | 351 | 5 | 1 | 2026-01-17 |

|

| 13 | [异步上下文压缩](https://openwebui.com/posts/异步上下文压缩_5c0617cb) | action | 1.2.2 | 20 | 486 | 5 | 1 | 2026-01-21 |

|

||||||

| 14 | [精读](https://openwebui.com/posts/精读_99830b0f) | action | 1.0.0 | 6 | 259 | 3 | 1 | 2026-01-08 |

|

| 14 | [闪记卡 (Flash Card)](https://openwebui.com/posts/闪记卡生成插件_4a31eac3) | action | 0.2.4 | 19 | 507 | 6 | 1 | 2026-01-17 |

|

||||||

| 15 | [Review of Claude Haiku 4.5](https://openwebui.com/posts/review_of_claude_haiku_45_41b0db39) | unknown | | 0 | 59 | 1 | 0 | 2026-01-14 |

|

| 15 | [精读](https://openwebui.com/posts/精读_99830b0f) | action | 1.0.0 | 9 | 306 | 3 | 1 | 2026-01-08 |

|

||||||

| 16 | [ 🛠️ Debug Open WebUI Plugins in Your Browser](https://openwebui.com/posts/debug_open_webui_plugins_in_your_browser_81bf7960) | unknown | | 0 | 1198 | 12 | 7 | 2026-01-10 |

|

| 16 | [GitHub Copilot Official SDK Pipe](https://openwebui.com/posts/github_copilot_official_sdk_pipe_ce96f7b4) | pipe | 0.1.1 | 0 | 8 | 1 | 0 | 2026-01-26 |

|

||||||

|

| 17 | [🚀 Open WebUI Prompt Plus: AI-Powered Prompt Manager](https://openwebui.com/posts/open_webui_prompt_plus_ai_powered_prompt_manager_s_15fa060e) | unknown | | 0 | 222 | 6 | 4 | 2026-01-25 |

|

||||||

|

| 18 | [Review of Claude Haiku 4.5](https://openwebui.com/posts/review_of_claude_haiku_45_41b0db39) | unknown | | 0 | 93 | 1 | 0 | 2026-01-14 |

|

||||||

|

| 19 | [ 🛠️ Debug Open WebUI Plugins in Your Browser](https://openwebui.com/posts/debug_open_webui_plugins_in_your_browser_81bf7960) | unknown | | 0 | 1270 | 12 | 8 | 2026-01-10 |

|

||||||

|

|||||||

@@ -1,40 +1,45 @@

|

|||||||

# 📊 OpenWebUI 社区统计报告

|

# 📊 OpenWebUI 社区统计报告

|

||||||

|

|

||||||

> 📅 更新时间: 2026-01-19 18:11

|

> 📅 更新时间: 2026-01-26 15:14

|

||||||

|

|

||||||

## 📈 总览

|

## 📈 总览

|

||||||

|

|

||||||

| 指标 | 数值 |

|

| 指标 | 数值 |

|

||||||

|------|------|

|

|------|------|

|

||||||

| 📝 发布数量 | 16 |

|

| 📝 发布数量 | 19 |

|

||||||

| ⬇️ 总下载量 | 1792 |

|

| ⬇️ 总下载量 | 2388 |

|

||||||

| 👁️ 总浏览量 | 21276 |

|

| 👁️ 总浏览量 | 27294 |

|

||||||

| 👍 总点赞数 | 120 |

|

| 👍 总点赞数 | 138 |

|

||||||

| 💾 总收藏数 | 135 |

|

| 💾 总收藏数 | 183 |

|

||||||

| 💬 总评论数 | 24 |

|

| 💬 总评论数 | 33 |

|

||||||

|

|

||||||

## 📂 按类型分类

|

## 📂 按类型分类

|

||||||

|

|

||||||

|

- **pipe**: 1

|

||||||

- **action**: 14

|

- **action**: 14

|

||||||

- **unknown**: 2

|

- **unknown**: 3

|

||||||

|

- **filter**: 1

|

||||||

|

|

||||||

## 📋 发布列表

|

## 📋 发布列表

|

||||||

|

|

||||||

| 排名 | 标题 | 类型 | 版本 | 下载 | 浏览 | 点赞 | 收藏 | 更新日期 |

|

| 排名 | 标题 | 类型 | 版本 | 下载 | 浏览 | 点赞 | 收藏 | 更新日期 |

|

||||||

|:---:|------|:---:|:---:|:---:|:---:|:---:|:---:|:---:|

|

|:---:|------|:---:|:---:|:---:|:---:|:---:|:---:|:---:|

|

||||||

| 1 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | action | 0.9.1 | 532 | 4822 | 15 | 28 | 2026-01-17 |

|

| 1 | [Smart Mind Map](https://openwebui.com/posts/turn_any_text_into_beautiful_mind_maps_3094c59a) | action | 0.9.1 | 629 | 5600 | 16 | 37 | 2026-01-17 |

|

||||||

| 2 | [📊 Smart Infographic (AntV)](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | action | 1.4.9 | 260 | 2514 | 14 | 20 | 2026-01-18 |

|

| 2 | [Smart Infographic](https://openwebui.com/posts/smart_infographic_ad6f0c7f) | action | 1.4.9 | 410 | 3621 | 18 | 27 | 2026-01-25 |

|

||||||

| 3 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | action | 0.3.7 | 209 | 800 | 4 | 5 | 2026-01-07 |

|

| 3 | [Export to Excel](https://openwebui.com/posts/export_mulit_table_to_excel_244b8f9d) | action | 0.3.7 | 255 | 1039 | 4 | 6 | 2026-01-07 |

|

||||||

| 4 | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | action | 1.1.3 | 180 | 1975 | 9 | 19 | 2026-01-17 |

|

| 4 | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | action | 0.4.3 | 229 | 1839 | 8 | 21 | 2026-01-17 |

|

||||||

| 5 | [Export to Word (Enhanced)](https://openwebui.com/posts/export_to_word_enhanced_formatting_fca6a315) | action | 0.4.3 | 158 | 1377 | 8 | 16 | 2026-01-17 |

|

| 5 | [Async Context Compression](https://openwebui.com/posts/async_context_compression_b1655bc8) | action | 1.2.2 | 227 | 2461 | 9 | 27 | 2026-01-21 |

|

||||||

| 6 | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | action | 0.2.4 | 138 | 2329 | 10 | 10 | 2026-01-17 |

|

| 6 | [Flash Card](https://openwebui.com/posts/flash_card_65a2ea8f) | action | 0.2.4 | 165 | 2674 | 11 | 13 | 2026-01-17 |

|

||||||

| 7 | [Markdown Normalizer](https://openwebui.com/posts/markdown_normalizer_baaa8732) | action | 1.2.3 | 84 | 2100 | 10 | 17 | 2026-01-17 |

|

| 7 | [Markdown Normalizer](https://openwebui.com/posts/markdown_normalizer_baaa8732) | action | 1.2.4 | 148 | 2762 | 10 | 20 | 2026-01-19 |

|

||||||

| 8 | [Deep Dive](https://openwebui.com/posts/deep_dive_c0b846e4) | action | 1.0.0 | 68 | 663 | 4 | 6 | 2026-01-08 |

|

| 8 | [Deep Dive](https://openwebui.com/posts/deep_dive_c0b846e4) | action | 1.0.0 | 91 | 839 | 4 | 8 | 2026-01-08 |

|

||||||

| 9 | [导出为 Word (增强版)](https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0) | action | 0.4.3 | 63 | 1305 | 11 | 3 | 2026-01-17 |

|

| 9 | [导出为 Word (增强版)](https://openwebui.com/posts/导出为_word_支持公式流程图表格和代码块_8a6306c0) | action | 0.4.3 | 87 | 1614 | 11 | 4 | 2026-01-17 |

|

||||||

| 10 | [📊 智能信息图 (AntV Infographic)](https://openwebui.com/posts/智能信息图_e04a48ff) | action | 1.4.9 | 42 | 683 | 6 | 0 | 2026-01-17 |

|

| 10 | [📊 智能信息图 (AntV Infographic)](https://openwebui.com/posts/智能信息图_e04a48ff) | action | 1.4.9 | 46 | 781 | 6 | 0 | 2026-01-17 |

|

||||||

| 11 | [思维导图](https://openwebui.com/posts/智能生成交互式思维导图帮助用户可视化知识_8d4b097b) | action | 0.9.1 | 22 | 398 | 3 | 1 | 2026-01-17 |

|

| 11 | [思维导图](https://openwebui.com/posts/智能生成交互式思维导图帮助用户可视化知识_8d4b097b) | action | 0.9.1 | 27 | 447 | 4 | 1 | 2026-01-17 |

|

||||||

| 12 | [闪记卡 (Flash Card)](https://openwebui.com/posts/闪记卡生成插件_4a31eac3) | action | 0.2.4 | 16 | 443 | 5 | 1 | 2026-01-17 |

|

| 12 | [📂 Folder Memory – Auto-Evolving Project Context](https://openwebui.com/posts/folder_memory_auto_evolving_project_context_4a9875b2) | filter | 0.1.0 | 26 | 725 | 3 | 4 | 2026-01-20 |

|

||||||

| 13 | [异步上下文压缩](https://openwebui.com/posts/异步上下文压缩_5c0617cb) | action | 1.1.3 | 14 | 351 | 5 | 1 | 2026-01-17 |

|

| 13 | [异步上下文压缩](https://openwebui.com/posts/异步上下文压缩_5c0617cb) | action | 1.2.2 | 20 | 486 | 5 | 1 | 2026-01-21 |

|

||||||

| 14 | [精读](https://openwebui.com/posts/精读_99830b0f) | action | 1.0.0 | 6 | 259 | 3 | 1 | 2026-01-08 |

|

| 14 | [闪记卡 (Flash Card)](https://openwebui.com/posts/闪记卡生成插件_4a31eac3) | action | 0.2.4 | 19 | 507 | 6 | 1 | 2026-01-17 |

|

||||||

| 15 | [Review of Claude Haiku 4.5](https://openwebui.com/posts/review_of_claude_haiku_45_41b0db39) | unknown | | 0 | 59 | 1 | 0 | 2026-01-14 |

|

| 15 | [精读](https://openwebui.com/posts/精读_99830b0f) | action | 1.0.0 | 9 | 306 | 3 | 1 | 2026-01-08 |

|

||||||

| 16 | [ 🛠️ Debug Open WebUI Plugins in Your Browser](https://openwebui.com/posts/debug_open_webui_plugins_in_your_browser_81bf7960) | unknown | | 0 | 1198 | 12 | 7 | 2026-01-10 |

|

| 16 | [GitHub Copilot Official SDK Pipe](https://openwebui.com/posts/github_copilot_official_sdk_pipe_ce96f7b4) | pipe | 0.1.1 | 0 | 8 | 1 | 0 | 2026-01-26 |

|

||||||

|

| 17 | [🚀 Open WebUI Prompt Plus: AI-Powered Prompt Manager](https://openwebui.com/posts/open_webui_prompt_plus_ai_powered_prompt_manager_s_15fa060e) | unknown | | 0 | 222 | 6 | 4 | 2026-01-25 |

|

||||||

|

| 18 | [Review of Claude Haiku 4.5](https://openwebui.com/posts/review_of_claude_haiku_45_41b0db39) | unknown | | 0 | 93 | 1 | 0 | 2026-01-14 |

|

||||||

|

| 19 | [ 🛠️ Debug Open WebUI Plugins in Your Browser](https://openwebui.com/posts/debug_open_webui_plugins_in_your_browser_81bf7960) | unknown | | 0 | 1270 | 12 | 8 | 2026-01-10 |

|

||||||

|

|||||||

@@ -1,7 +1,7 @@

|

|||||||

# Async Context Compression

|

# Async Context Compression

|

||||||

|

|

||||||

<span class="category-badge filter">Filter</span>

|

<span class="category-badge filter">Filter</span>

|

||||||

<span class="version-badge">v1.2.0</span>

|

<span class="version-badge">v1.2.2</span>

|

||||||

|

|

||||||

Reduces token consumption in long conversations through intelligent summarization while maintaining conversational coherence.

|

Reduces token consumption in long conversations through intelligent summarization while maintaining conversational coherence.

|

||||||

|

|

||||||

@@ -38,6 +38,8 @@ This is especially useful for:

|

|||||||

- :material-format-align-justify: **Structure-Aware Trimming**: Preserves document structure

|

- :material-format-align-justify: **Structure-Aware Trimming**: Preserves document structure

|

||||||

- :material-content-cut: **Native Tool Output Trimming**: Trims verbose tool outputs (Note: Non-native tool outputs are not fully injected into context)

|

- :material-content-cut: **Native Tool Output Trimming**: Trims verbose tool outputs (Note: Non-native tool outputs are not fully injected into context)

|

||||||

- :material-chart-bar: **Detailed Token Logging**: Granular token breakdown

|

- :material-chart-bar: **Detailed Token Logging**: Granular token breakdown

|

||||||

|

- :material-account-search: **Smart Model Matching**: Inherit config from base models

|

||||||

|

- :material-image-off: **Multimodal Support**: Images are preserved but tokens are **NOT** calculated

|

||||||

|

|

||||||

---

|

---

|

||||||

|

|

||||||

@@ -73,6 +75,7 @@ graph TD

|

|||||||

| `keep_first` | integer | `1` | Always keep the first N messages |

|

| `keep_first` | integer | `1` | Always keep the first N messages |

|

||||||

| `keep_last` | integer | `6` | Always keep the last N messages |

|

| `keep_last` | integer | `6` | Always keep the last N messages |

|

||||||

| `summary_model` | string | `None` | Model to use for summarization |

|

| `summary_model` | string | `None` | Model to use for summarization |

|

||||||

|

| `summary_model_max_context` | integer | `0` | Max context tokens for summary model |

|

||||||

| `max_summary_tokens` | integer | `16384` | Maximum tokens for the summary |

|

| `max_summary_tokens` | integer | `16384` | Maximum tokens for the summary |

|

||||||

| `enable_tool_output_trimming` | boolean | `false` | Enable trimming of large tool outputs |

|

| `enable_tool_output_trimming` | boolean | `false` | Enable trimming of large tool outputs |

|

||||||

|

|

||||||

|

|||||||

@@ -1,7 +1,7 @@

|

|||||||

# Async Context Compression(异步上下文压缩)

|

# Async Context Compression(异步上下文压缩)

|

||||||

|

|

||||||

<span class="category-badge filter">Filter</span>

|

<span class="category-badge filter">Filter</span>

|

||||||

<span class="version-badge">v1.2.0</span>

|

<span class="version-badge">v1.2.2</span>

|

||||||

|

|

||||||

通过智能摘要减少长对话的 token 消耗,同时保持对话连贯。

|

通过智能摘要减少长对话的 token 消耗,同时保持对话连贯。

|

||||||

|

|

||||||

@@ -38,6 +38,8 @@ Async Context Compression 过滤器通过以下方式帮助管理长对话的 to

|

|||||||

- :material-format-align-justify: **结构感知裁剪**:保留文档结构的智能裁剪

|

- :material-format-align-justify: **结构感知裁剪**:保留文档结构的智能裁剪

|

||||||

- :material-content-cut: **原生工具输出裁剪**:自动裁剪冗长的工具输出(注意:非原生工具调用输出不会完整注入上下文)

|

- :material-content-cut: **原生工具输出裁剪**:自动裁剪冗长的工具输出(注意:非原生工具调用输出不会完整注入上下文)

|

||||||

- :material-chart-bar: **详细 Token 日志**:提供细粒度的 Token 统计

|

- :material-chart-bar: **详细 Token 日志**:提供细粒度的 Token 统计

|

||||||

|

- :material-account-search: **智能模型匹配**:自定义模型自动继承基础模型配置

|

||||||

|

- :material-image-off: **多模态支持**:图片内容保留但 Token **不参与计算**

|

||||||

|

|

||||||

---

|

---

|

||||||

|

|

||||||

@@ -73,6 +75,7 @@ graph TD

|

|||||||

| `keep_first` | integer | `1` | 始终保留的前 N 条消息 |

|

| `keep_first` | integer | `1` | 始终保留的前 N 条消息 |

|

||||||

| `keep_last` | integer | `6` | 始终保留的后 N 条消息 |

|

| `keep_last` | integer | `6` | 始终保留的后 N 条消息 |

|

||||||

| `summary_model` | string | `None` | 用于摘要的模型 |

|

| `summary_model` | string | `None` | 用于摘要的模型 |

|

||||||

|

| `summary_model_max_context` | integer | `0` | 摘要模型的最大上下文 Token 数 |

|

||||||

| `max_summary_tokens` | integer | `16384` | 摘要的最大 token 数 |

|

| `max_summary_tokens` | integer | `16384` | 摘要的最大 token 数 |

|

||||||

| `enable_tool_output_trimming` | boolean | `false` | 启用长工具输出裁剪 |

|

| `enable_tool_output_trimming` | boolean | `false` | 启用长工具输出裁剪 |

|

||||||

|

|

||||||

|

|||||||

57

docs/plugins/filters/folder-memory.md

Normal file


@@ -0,0 +1,57 @@

|

|||||||

|

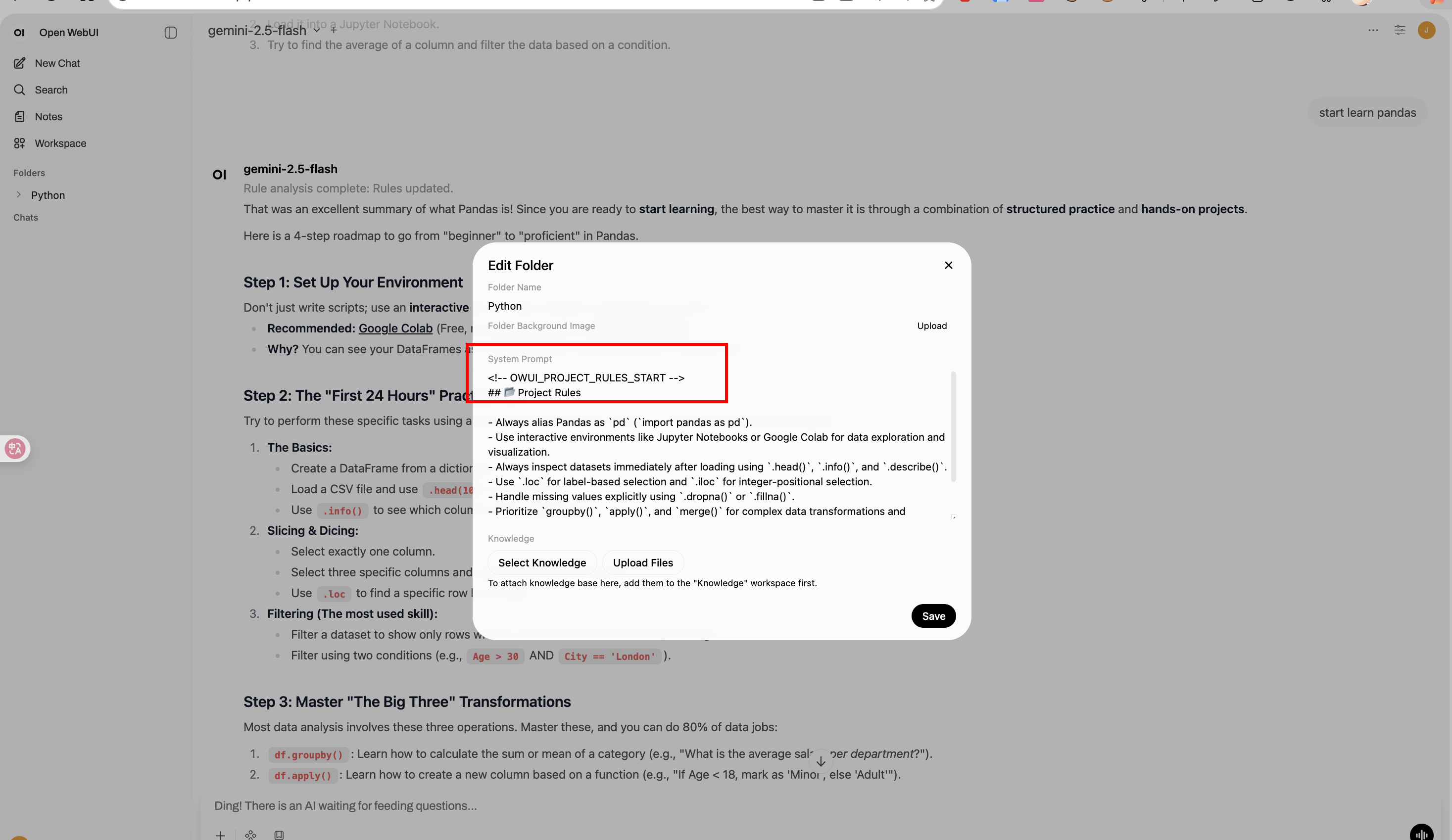

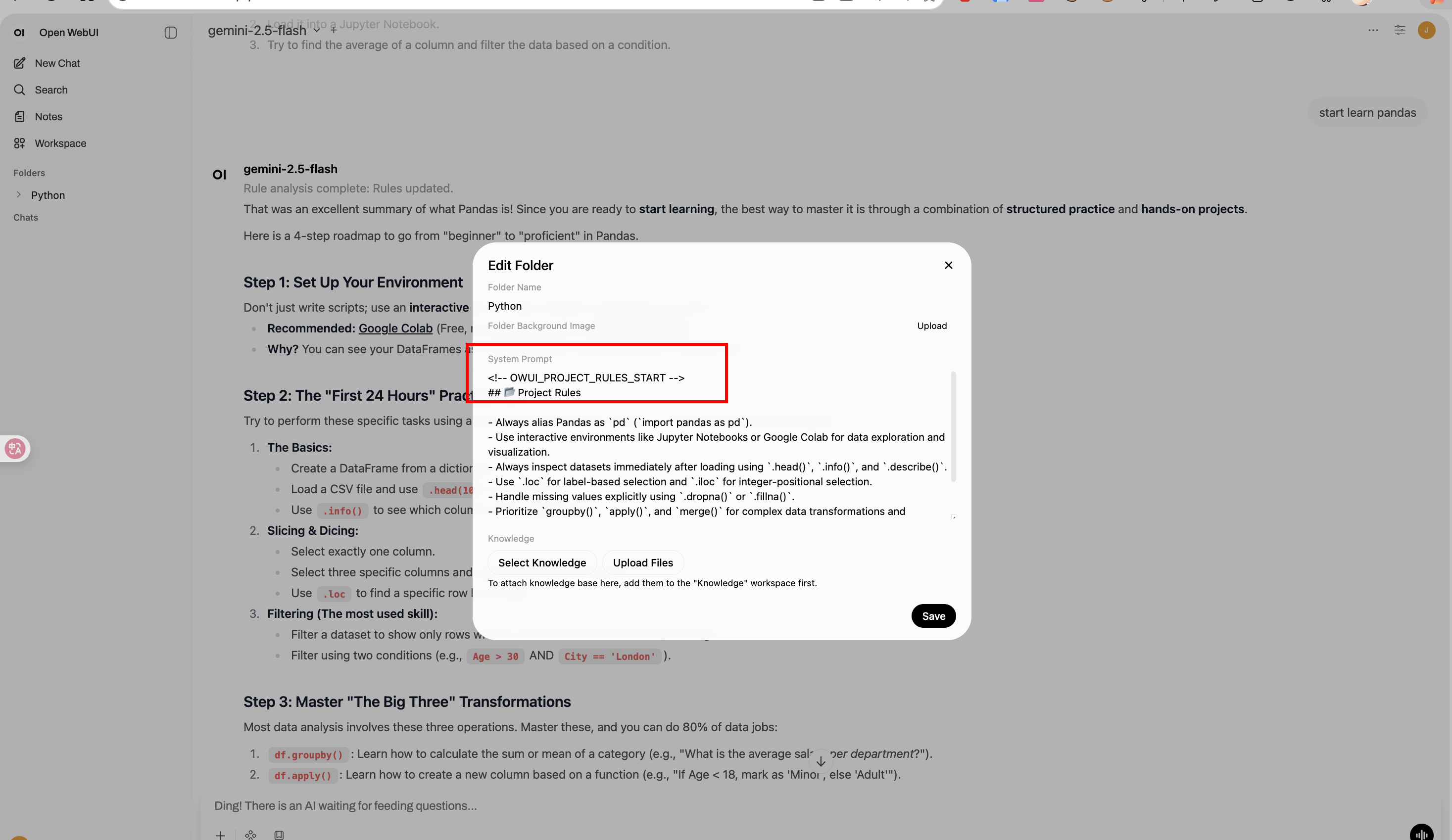

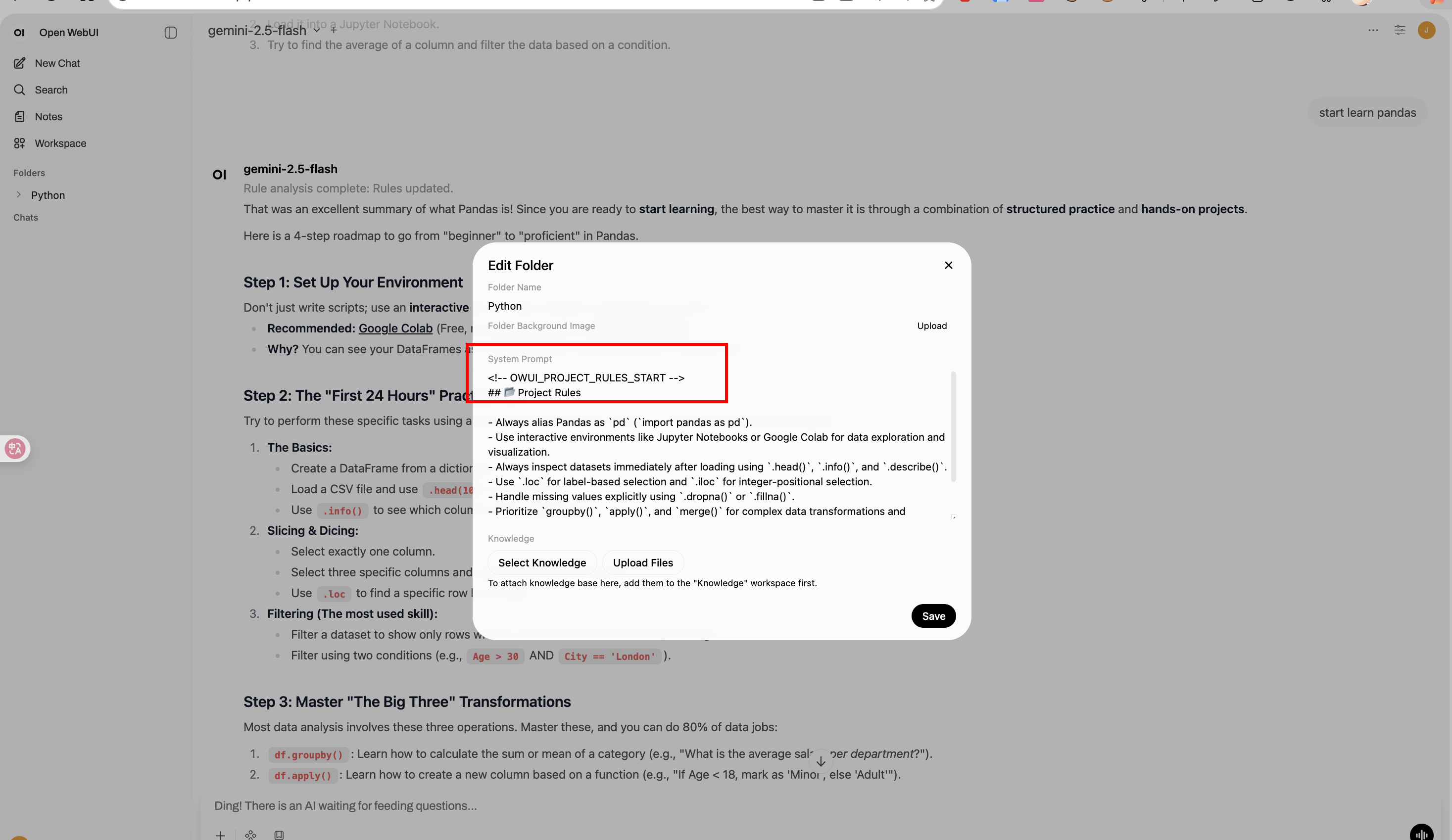

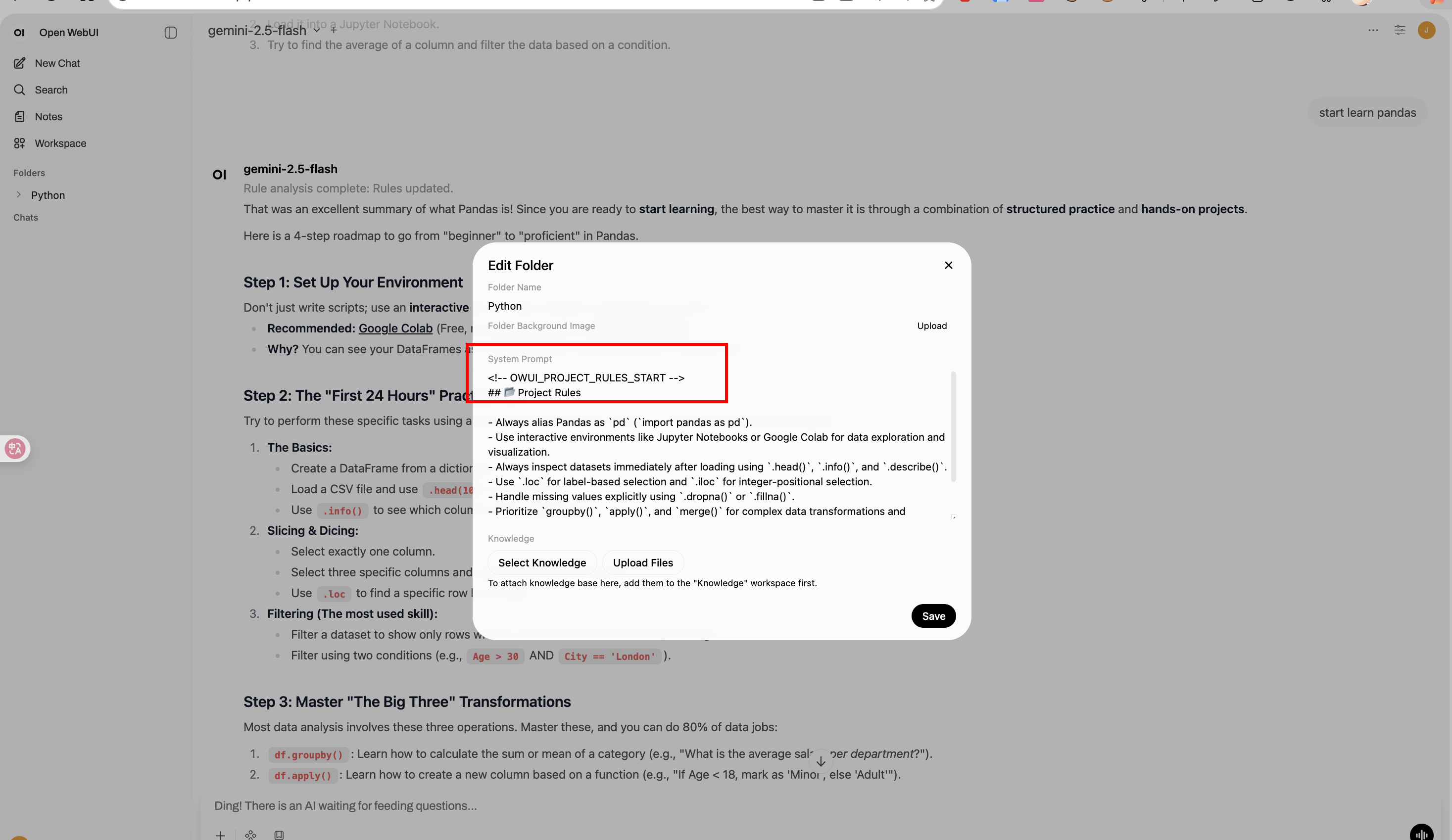

# Folder Memory

|

||||||

|

|

||||||

|

**Author:** [Fu-Jie](https://github.com/Fu-Jie/awesome-openwebui) | **Version:** 0.1.0 | **Project:** [Awesome OpenWebUI](https://github.com/Fu-Jie/awesome-openwebui) | **License:** MIT

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

### 📌 What's new in 0.1.0

|

||||||

|

- **Initial Release**: Automated "Project Rules" management for OpenWebUI folders.

|

||||||

|

- **Folder-Level Persistence**: Automatically updates folder system prompts with extracted rules.

|

||||||

|

- **Optimized Performance**: Runs asynchronously and supports `PRIORITY` configuration for seamless integration with other filters.

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

**Folder Memory** is an intelligent context filter plugin for OpenWebUI. It automatically extracts consistent "Project Rules" from ongoing conversations within a folder and injects them back into the folder's system prompt.

|

||||||

|

|

||||||

|

This ensures that all future conversations within that folder share the same evolved context and rules, without manual updates.

|

||||||

|

|

||||||

|

## Features

|

||||||

|

|

||||||

|

- **Automatic Extraction**: Analyzes chat history every N messages to extract project rules.

|

||||||

|

- **Non-destructive Injection**: Updates only the specific "Project Rules" block in the system prompt, preserving other instructions.

|

||||||

|

- **Async Processing**: Runs in the background without blocking the user's chat experience.

|

||||||

|

- **ORM Integration**: Directly updates folder data using OpenWebUI's internal models for reliability.

|

||||||

|

|

||||||

|

## Prerequisites

|

||||||

|

|

||||||

|

- **Conversations must occur inside a folder.** This plugin only triggers when a chat belongs to a folder (i.e., you need to create a folder in OpenWebUI and start a conversation within it).

|

||||||

|

|

||||||

|

## Installation

|

||||||

|

|

||||||

|

1. Copy `folder_memory.py` to your OpenWebUI `plugins/filters/` directory (or upload via Admin UI).

|

||||||

|

2. Enable the filter in your **Settings** -> **Filters**.

|

||||||

|

3. (Optional) Configure the triggering threshold (default: every 10 messages).

|

||||||

|

|

||||||

|

## Configuration (Valves)

|

||||||

|

|

||||||

|

| Valve | Default | Description |

|

||||||

|

| :--- | :--- | :--- |

|

||||||

|

| `PRIORITY` | `20` | Priority level for the filter operations. |

|

||||||

|

| `MESSAGE_TRIGGER_COUNT` | `10` | The number of messages required to trigger a rule analysis. |

|

||||||

|

| `MODEL_ID` | `""` | The model used to generate rules. If empty, uses the current chat model. |

|

||||||

|

| `RULES_BLOCK_TITLE` | `## 📂 Project Rules` | The title displayed above the injected rules block. |

|

||||||

|

| `SHOW_DEBUG_LOG` | `False` | Show detailed debug logs in the browser console. |

|

||||||

|

| `UPDATE_ROOT_FOLDER` | `False` | If enabled, finds and updates the root folder rules instead of the current subfolder. |
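
A minimal sketch of how the valve defaults in the table above could be represented, assuming a plain dataclass as a dependency-free stand-in (the plugin's actual declaration is not shown in this diff). `resolve_model` is a hypothetical helper illustrating the documented fallback for an empty `MODEL_ID`.

```python
from dataclasses import dataclass


@dataclass
class Valves:
    """Hypothetical stand-in mirroring the documented valve defaults."""

    PRIORITY: int = 20
    MESSAGE_TRIGGER_COUNT: int = 10
    MODEL_ID: str = ""
    RULES_BLOCK_TITLE: str = "## 📂 Project Rules"
    SHOW_DEBUG_LOG: bool = False
    UPDATE_ROOT_FOLDER: bool = False


def resolve_model(valves: Valves, chat_model: str) -> str:
    """Return MODEL_ID if set; otherwise fall back to the current chat model."""
    return valves.MODEL_ID or chat_model
```

For example, `resolve_model(Valves(), "my-chat-model")` falls back to the chat model, while `Valves(MODEL_ID="rules-model")` overrides it.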

|

||||||

|

|

||||||

|

## How It Works

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

1. **Trigger**: Analysis runs when a conversation reaches a multiple of `MESSAGE_TRIGGER_COUNT` messages (e.g., at 10 and 20 messages with the default of 10).

|

||||||

|

2. **Analysis**: The plugin sends the recent conversation + existing rules to the LLM.

|

||||||

|

3. **Synthesis**: The LLM merges new insights with old rules, removing obsolete ones.

|

||||||

|

4. **Update**: The new rule set replaces the `<!-- OWUI_PROJECT_RULES_START -->` block in the folder's system prompt.
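
The trigger and update steps above can be sketched roughly as follows. This is an illustration under stated assumptions, not the plugin's code: the closing marker `<!-- OWUI_PROJECT_RULES_END -->` is assumed (only the start marker is named in the docs), the trigger is assumed to fire on multiples of the threshold, and `should_trigger` and `inject_rules` are hypothetical helper names.

```python
import re

START = "<!-- OWUI_PROJECT_RULES_START -->"
# Assumed closing marker; the documentation only names the start marker.
END = "<!-- OWUI_PROJECT_RULES_END -->"


def should_trigger(message_count: int, trigger_count: int = 10) -> bool:
    """Assumed rule: fire whenever the count hits a positive multiple of the threshold."""
    return trigger_count > 0 and message_count > 0 and message_count % trigger_count == 0


def inject_rules(system_prompt: str, rules: str, title: str = "## 📂 Project Rules") -> str:
    """Replace the existing rules block, or append one, leaving other text untouched."""
    block = f"{START}\n{title}\n{rules.strip()}\n{END}"
    pattern = re.compile(re.escape(START) + r".*?" + re.escape(END), re.DOTALL)
    if pattern.search(system_prompt):
        # A function replacement avoids backslash-escape handling in the new text.
        return pattern.sub(lambda _match: block, system_prompt, count=1)
    separator = "\n\n" if system_prompt.strip() else ""
    return f"{system_prompt.rstrip()}{separator}{block}"
```

Calling `inject_rules` twice on the same prompt leaves a single block containing only the latest rules, which is the non-destructive behavior the Features list describes.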

|

||||||

|

|

||||||

|

## Roadmap

|

||||||

|

|

||||||

|